The recent market turbulence and changes in the business world have brought to light many gaps in the supply chain. Remote work, unexpected spikes in demand, and logistics complexity have put supply chains in the spotlight like never before. Many businesses have been affected by commodity volatility, changing demand forecasts, and supplier-specific challenges. By putting data analytics to work, companies can fine-tune their supply chains in ways that simply were not possible in the past. Big data and predictive analytics in supply chain management allow retailers, suppliers, and manufacturers to make the supply chain more resilient and efficient. Let’s review some successful big data and predictive analytics case studies in the supply chain that reveal the potential that data and analytics hold.

Supply Chain 4.0

Industry 4.0 offers a smarter approach to supply chain management, including production, procurement, warehousing, inventory management, and logistics. It makes retailers, suppliers, and manufacturers rethink the way they design their supply chains and implement intelligent technology in supply chain management.

Supply chain 4.0 is all about the application of the Internet of Things, robotics, big data and predictive analytics in supply chain management. The main goal is to create a ‘smart’ supply chain that utilizes data from various types of sensors and all the available sources in order to optimize the processes.

“Smart manufacturing processes and workflows generate extremely large volumes of data, but the vast majority of it is useless without ML models and AI workflows to identify, infer, or act upon patterns in the data. Advanced analytics solutions support many IoT use cases, including predictive maintenance and intelligent workload optimization.” - The Forrester Tech Tide™: Smart Manufacturing, Q2 2020, Forrester Research, Inc., April 1, 2020

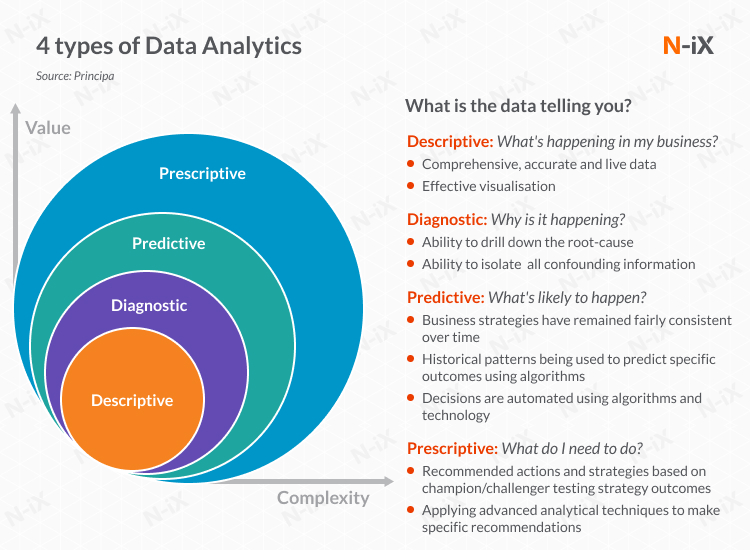

Data analytics helps process this huge volume of data and derive valuable insights. There are different types of data analytics:

- Descriptive analytics helps interpret historical data to better understand changes that have occurred in a business.

- Diagnostic analytics makes it possible to identify the root cause of an issue. It is characterized by techniques such as drill-down, data discovery, data mining, and correlations.

- Predictive analytics analyzes historical patterns to predict specific outcomes using algorithms.

- Prescriptive analytics offers recommended actions and strategies to solve issues based on the computational findings of algorithmic models.

In the supply chain, the time has come to shift from mere descriptive and diagnostic analytics to predictive and prescriptive analytics. With the help of big data and data analytics, it is possible not only to describe what is happening and why, but also to forecast issues and actually solve them.
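To make the difference between these analytics types more concrete, here is a minimal Python sketch that applies three of them to a single demand series. The numbers, function names, and the simple linear-trend forecast are all illustrative assumptions rather than a method from the case studies below; diagnostic analytics is left out because it needs additional explanatory data.

```python
from statistics import mean

# Hypothetical weekly unit demand for one product (illustrative numbers only).
weekly_demand = [120, 130, 125, 140, 150, 160]


def describe(history):
    """Descriptive: summarize what has already happened."""
    return {"mean": mean(history), "min": min(history), "max": max(history)}


def forecast_next(history):
    """Predictive: extrapolate a least-squares linear trend one step ahead."""
    n = len(history)
    xs = range(n)
    x_bar, y_bar = mean(xs), mean(history)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, history)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return intercept + slope * n


def recommend_order(forecast, on_hand, safety_stock):
    """Prescriptive: turn the forecast into a suggested order quantity."""
    return max(0, round(forecast + safety_stock - on_hand))


summary = describe(weekly_demand)
next_week = forecast_next(weekly_demand)
# on_hand and safety_stock are assumed values chosen for the example.
order_qty = recommend_order(next_week, on_hand=100, safety_stock=20)
print(summary, next_week, order_qty)
```

A production forecast would normally account for seasonality, promotions, and lead times; the point here is only that the predictive step produces a number and the prescriptive step turns that number into an action.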

So why are manufacturers, suppliers, and retailers around the globe investing in predictive analytics?

Benefits of big data and predictive analytics in supply chain management

Companies are ready to invest billions in predictive analytics in the supply chain to ensure greater visibility into operations, reduce costs, and drive better performance. Here is a list of the main advantages of big data and predictive analytics in supply chain management:

- Demand forecasting

- Delivery prediction

- Predictive maintenance of equipment

- Predicting customer preferences

- Offering customer-specific services and products

- Reducing customer churn

- Preserving margins

- Making more-informed business decisions

- Identifying opportunities to make more money

- Preventing supply chain interruptions

- Identifying hidden inefficiencies

- Predicting and mitigating risks

- Pricing analytics

- Predicting suspicious behavior

Supply chain and predictive analytics: case study #1

About our client

Our client is a Global Fortune 100 multinational engineering and technology company based in Germany. The company operates through a complex network of over 440 subsidiaries and regional entities in over 60 countries worldwide.

The challenge:

Being a leading global supplier of technology and services, our client has many factories, warehouses, and suppliers, as well as a lot of raw materials and finished goods, which circulate among them. To improve the logistics between warehouses in different countries, the client introduced an internal logistics platform. The platform is used by warehouse staff to efficiently allocate and manage goods and materials. However, after being in use in a few warehouses for several months, the platform turned out to have a lot of flaws and was unsuitable for further scaling.

Leveraging predictive analytics and ML for supply chain management

The client has partnered with N-iX specialists to modernize the logistics platform and make it scalable. The solution is in the development phase. The modernized platform will significantly improve the efficiency of warehouses in over 60 countries, reducing operational overhead and warehouse downtime.

Migrating to microservices

The core reason why the platform was inefficient and could not scale was its monolithic architecture. Therefore, our Solution Architect designed and presented a new cloud-native infrastructure for the platform based on Azure Kubernetes, along with the suggested tech stack and the most efficient roadmap.

DevOps best practices

The need for DevOps expertise was identified as another pain point for the customer. Therefore, we are building the DevOps pipeline from scratch, setting up the environment for development and QA in Azure, and introducing CI/CD processes that allow us to easily assemble and deploy microservices to the environment.

Computer Vision solution

The core component of this project is the Computer Vision (CV) solution for docks that allows contactless tracking of goods with industrial optical sensors and Nvidia Jetson devices. Our client had CV algorithms written by another vendor, which were inefficient and unsuitable for production. Therefore, we found a top-notch CV expert with a Ph.D. degree to run the CV workstream. After careful examination of the existing algorithms, we decided to redevelop them completely. We changed the architecture of the solution and introduced Continuous Delivery for Machine Learning, which makes it possible to run continuously repeatable cycles of training, testing, deploying, monitoring, and operating the ML models. That is especially important given the global scale at which our client is operating.
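The repeatable train, test, and deploy cycle behind Continuous Delivery for Machine Learning can be sketched roughly as follows. This is a toy illustration under stated assumptions, not the project's pipeline: the `Model` class is a one-parameter threshold classifier standing in for a real CV model, and `train`, `accuracy`, `run_cycle`, and `min_gain` are hypothetical names introduced for the example.

```python
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple

Sample = Tuple[float, int]  # (feature value, label); a stand-in for real image data


@dataclass
class Model:
    """Toy classifier: predicts 1 when the feature reaches the threshold."""
    threshold: float

    def predict(self, x: float) -> int:
        return 1 if x >= self.threshold else 0


def accuracy(model: Model, samples: Sequence[Sample]) -> float:
    """Share of samples the model labels correctly."""
    return sum(model.predict(x) == y for x, y in samples) / len(samples)


def train(samples: Sequence[Sample]) -> Model:
    """Toy training step: pick the threshold with the best training accuracy."""
    candidates = sorted({x for x, _ in samples})
    return max((Model(t) for t in candidates), key=lambda m: accuracy(m, samples))


def run_cycle(
    train_set: Sequence[Sample],
    test_set: Sequence[Sample],
    deployed: Optional[Model],
    min_gain: float = 0.0,
) -> Model:
    """One train -> test -> deploy decision; returns the model that should be live."""
    candidate = train(train_set)
    if deployed is None:
        return candidate
    # Promote only if the candidate beats the live model on held-out data.
    if accuracy(candidate, test_set) > accuracy(deployed, test_set) + min_gain:
        return candidate
    return deployed
```

In a real setup, each call to `run_cycle` would be triggered automatically by new data or new code, and the monitoring step would feed production metrics back into the next cycle.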

Multiplatform CV mobile app

Also, our team designed the architecture of the multiplatform Computer Vision mobile app and is responsible for its end-to-end development. The app covers object detection, package damage detection, OCR, and NLP for document processing.

Benefits:

The modernized and scalable logistics platform will significantly improve the efficiency of internal logistics in a number of ways:

- Automating manual work and reducing paperwork for warehouse staff.

- Streamlining inventory management for 400+ warehouses around the globe.

- Tracking packages in near real time, effectively managing the delivery statuses of boxes, and predicting warehouse load.

- Detecting package damage and filtering out defective packages.

- More effective planning, reducing operational overhead and warehouse downtime.

- Improving cost efficiency thanks to the ability to select a cloud provider with the best offering.

Supply chain and big data analytics: case study #2

About our client

Our client is a Fortune 500 industrial supply company. It offers over 1.6 million quality in-stock products in such categories as safety, material handling, and metalworking. Also, the company provides inventory management and technical support to more than 3 million customers in North America.

The challenge:

Being a large-scale industrial supply company, our client needed to efficiently manage large amounts of data, including data on inventory-related costs. Therefore, the company decided to extend its data warehouse solution, which collects data from multiple departments.

For the development and support of the solution, our client hired on-site consultants. However, that caused significant overhead, and the company was looking to reduce these operational costs. Also, our client wanted to migrate the solution to the cloud to make it more scalable and cost-efficient.

Unified cloud-based big data platform for effective inventory management

The project is in the development phase. Our specialists are building an environment that will be able to process large datasets. The use of Snowflake and Airflow allows us to automate the data extraction process. Also, Snowflake minimizes data duplication by checking whether the ingested files have already been processed.
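The idea of skipping files that have already been processed can be illustrated with a small, generic Python sketch. It is not Snowflake's implementation and does not use the Snowflake or Airflow APIs; the `LoadLedger` class, the `ingest` function, and the file names are hypothetical, and the in-memory set stands in for whatever persistent load history a real platform keeps.

```python
import hashlib


class LoadLedger:
    """Remembers which files were already ingested, keyed by name and content hash."""

    def __init__(self):
        self._seen = set()

    def should_load(self, name: str, content: bytes) -> bool:
        key = (name, hashlib.sha256(content).hexdigest())
        if key in self._seen:
            return False
        self._seen.add(key)
        return True


def ingest(files, ledger, table):
    """Append rows from unseen files to `table`; return the names of skipped files."""
    skipped = []
    for name, content in files:
        if ledger.should_load(name, content):
            table.extend(content.decode("utf-8").splitlines())
        else:
            skipped.append(name)
    return skipped


ledger = LoadLedger()
table = []
batch = [("inventory_day1.csv", b"sku-1,10\nsku-2,5")]

first_run = ingest(batch, ledger, table)   # loads both rows
second_run = ingest(batch, ledger, table)  # same file again: skipped, no duplicates
print(first_run, second_run, len(table))
```

Because the ledger makes the load step idempotent, a scheduled job can safely be re-run after a failure without duplicating rows.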

We have integrated more than 100 different data sources into a unified data platform. This includes daily data loads, along with a backfill of historical data. We are working with terabytes of data, and the volume is growing.

To migrate from an on-premises Hortonworks Hadoop cluster to AWS and allow processing additional data in AWS, the N-iX team built an AWS-based big data platform from scratch. Also, we have been involved in extending and supporting the existing Teradata solution. Teradata is used to collect data from other systems and then generate reports with BusinessObjects and Tableau. The data sources are MS SQL, Oracle, and SAP.

To choose the data warehouse design and the tech stack that fit our client’s business needs, our specialists created a proof of concept. We compared Amazon Redshift with Snowflake and chose Snowflake because it fits the client’s cloud-neutral approach: it can easily scale computing power up and down for any number of workloads and across any combination of clouds.

The whole development process is cloud-agnostic and is designed to ensure that the client can easily change the cloud provider in the future. For example, we use Terraform because it works with all major cloud vendors, including AWS, Azure, and Google Cloud.

Benefits:

- Predictive analytics capabilities of the platform. For example, the finance department will be able to predict the inventory-related expenses.

- Saving infrastructure costs with cloud migration.

- Improved efficiency of data management thanks to the unified data platform that stores all the data in one place.

- Using cloud-neutral technology to avoid vendor lock-in and benefit from different cloud providers.

- Reducing software development overhead by replacing expensive on-site contractors.

How to select a vendor for a big data and predictive analytics project in the supply chain?

There are several simple things you need to know when it comes to successful vendor selection:

- Identify your business goals and set clear selection criteria.

- Choose an outsourcing destination (region, country, or city).

- Explore the local IT landscape and make a list of potential candidates.

- Choose a vendor with previous experience in implementing predictive analytics in the supply chain. Review the vendor’s portfolio of big data and predictive analytics case studies in the supply chain.

- Make sure the vendor has profound tech expertise in AI, ML, IoT, Big Data, Data Science, and Cloud.

- Negotiate a win-win contract with the vendor you have selected.

Here is a more detailed guide on how to choose the best offshore outsourcing company.

Why choose N-iX for implementing big data and predictive analytics in your supply chain?

- N-iX is trusted in the global tech market: the company has been listed among the top software development providers by Clutch, included in the Global Outsourcing 100 by IAOP for 6 consecutive years, recognized by the GSA UK Awards, and named among the top software development companies by GoodFirms.co, among others.

- A pool of 1,900+ experts that have experience working with business cases of different complexity and size.

- A team of 170+ data specialists.

- N-iX has delivery offices in Ukraine, Poland, and Bulgaria.

- Expertise in the most relevant tech stack for implementing Big Data engineering, BI, Data Science, AI/Machine Learning solutions.

- N-iX has been selected as a finalist in two categories for the European IT & Software Excellence Awards 2020.

- N-iX is a certified AWS Select Consulting Partner, a Microsoft gold certified partner, a Google Cloud Platform Partner, an OpenText Services silver partner, and an SAP partner.

- N-iX is compliant with ISO 9001, ISO 27001, PCI DSS, and GDPR standards.

- N-iX partners with Fortune 500 companies helping them make the most of big data and predictive analytics in supply chain management. We have proven experience in data engineering and data analytics supported by real-life big data and predictive analytics case studies in the supply chain.
