According to Verizon's 2025 Data Breach Investigations Report, exploitation of software vulnerabilities as an initial breach vector rose 34% year over year. Now it accounts for 20% of all data breaches, nearly as common as stolen credentials. That figure has tripled over two years. The vulnerabilities being exploited were not zero-day issues discovered by advanced attackers. Most of them were known, documented weaknesses in systems that organizations had months to address. The process to find and remediate them was either absent, incomplete, or never audited.

That pattern repeats across industries and organization sizes. Systems get breached, projects run over by months, and compliance gaps emerge in production. When teams investigate root causes, the answer is rarely a rogue engineer or a technical failure. It's a software development lifecycle that was never properly examined: the software itself was audited, but no one reviewed whether the process that produced it was sound.

An SDLC audit is what closes that gap. It's not a bureaucratic formality or a box to check before a regulatory review. Done properly, it gives organizations a clear, evidence-based picture of whether their software engineering process is structured to produce safe, reliable, and compliant software—and where it isn't. This guide covers what an SDLC audit is, why it matters to business outcomes, and what a structured audit process looks like in practice.

What is an SDLC audit?

An SDLC audit is a structured review of an organization's software development lifecycle, the end-to-end process by which software is planned, built, tested, deployed, and maintained. The audit examines whether that process is documented, consistently followed, appropriately controlled, and aligned with the organization's security, quality, and compliance obligations.

It is not the same as a code review, a penetration test, or a security scan. Those examine outputs. This audit examines the process that produces them. The distinction matters because a single code review tells you whether a specific piece of software is secure today. An SDLC audit tells you whether your development process is capable of producing secure software consistently, across teams, projects, and time.

The scope of an SDLC audit typically covers:

- Requirements management (how requirements are gathered, validated, and traced through delivery);

- Design and architecture review (whether security is built in from the start, not added later);

- Development practices, including coding standards and AI tool governance;

- Testing and QA strategy;

- Change management controls;

- Deployment and release procedures;

- Incident and defect tracking;

- Compliance documentation.

This type of audit can be conducted internally by a dedicated quality or governance function, or externally by an independent third party. External audits are typically required when compliance certification is at stake or when the organization needs an objective view of process maturity without internal bias.

Why SDLC audits matter to business outcomes

Most delivery problems that reach the executive level (a project running months over, a security incident, a failed compliance review) were visible long before they escalated. The process that should have surfaced them earlier either didn't exist or was never examined.

Software risk accumulates in the process, not just the code

Most software failures that reach production don't originate in the code itself. They originate in the process that produced the code: a testing step bypassed under deadline pressure, a change that was never formally reviewed, a security requirement that existed in a document but was never wired into any development gate.

A 2024 analysis by Synopsys found that 84% of codebases contained at least one open-source vulnerability, and the majority of those vulnerabilities were introduced through process gaps, such as outdated dependency management, incomplete scanning, or no formal policy governing open-source use. The process was the problem. An SDLC audit finds those gaps before they find you.

Compliance increasingly requires process evidence, not just outcomes

Regulatory frameworks across fintech, healthtech, defense contracting, and data-sensitive industries don't just ask whether your software is compliant. They ask whether your process for building software is compliant. Standards including ISO 27001, SOC 2, IEC 62304, PCI DSS, and the EU Cyber Resilience Act require organizations to demonstrate that security, quality, and auditability are built into the development process with documented evidence, not retrospective assertions.

An SDLC audit produces that evidence systematically. It maps the current process against the relevant standard, identifies gaps, and creates a documented baseline that regulators and auditors can verify. Organizations that audit their SDLC regularly typically spend significantly less time and money on compliance reviews because the documentation and controls are already in place.

AI-assisted development has introduced new process risks that most audits don't yet cover

As of 2025, over half of professional developers use AI development tools daily. That adoption has measurably accelerated delivery timelines. It has also introduced a class of process risk that most SDLC frameworks and audit checklists have not yet caught up with. Examples include AI-generated code that passes review because reviewers assume it was human-authored, IP and licensing exposure from training data provenance, and compliance gaps where AI tools are used without organizational policies governing their output.

A mature audit in 2026 includes an explicit assessment of how AI tools are integrated into the development process, what governance exists around their use, and whether the review and testing gates designed to catch human errors are also equipped to catch AI-generated ones.

Delivery problems that look like team failures are often process failures

When a project runs late, the explanation is often the same: requirements changed, the team underestimated complexity, or a key engineer left. These are symptoms. The underlying cause is usually a process with no formal change management, no structured estimation methodology, or no cross-training protocol—risks that an audit would have surfaced months earlier.

According to the Standish Group's Chaos Report, a significant proportion of software projects still finish late, over budget, or are abandoned entirely. The differentiator between organizations that consistently deliver and those that don't is rarely talent. It's process discipline, and whether that discipline is audited and enforced.

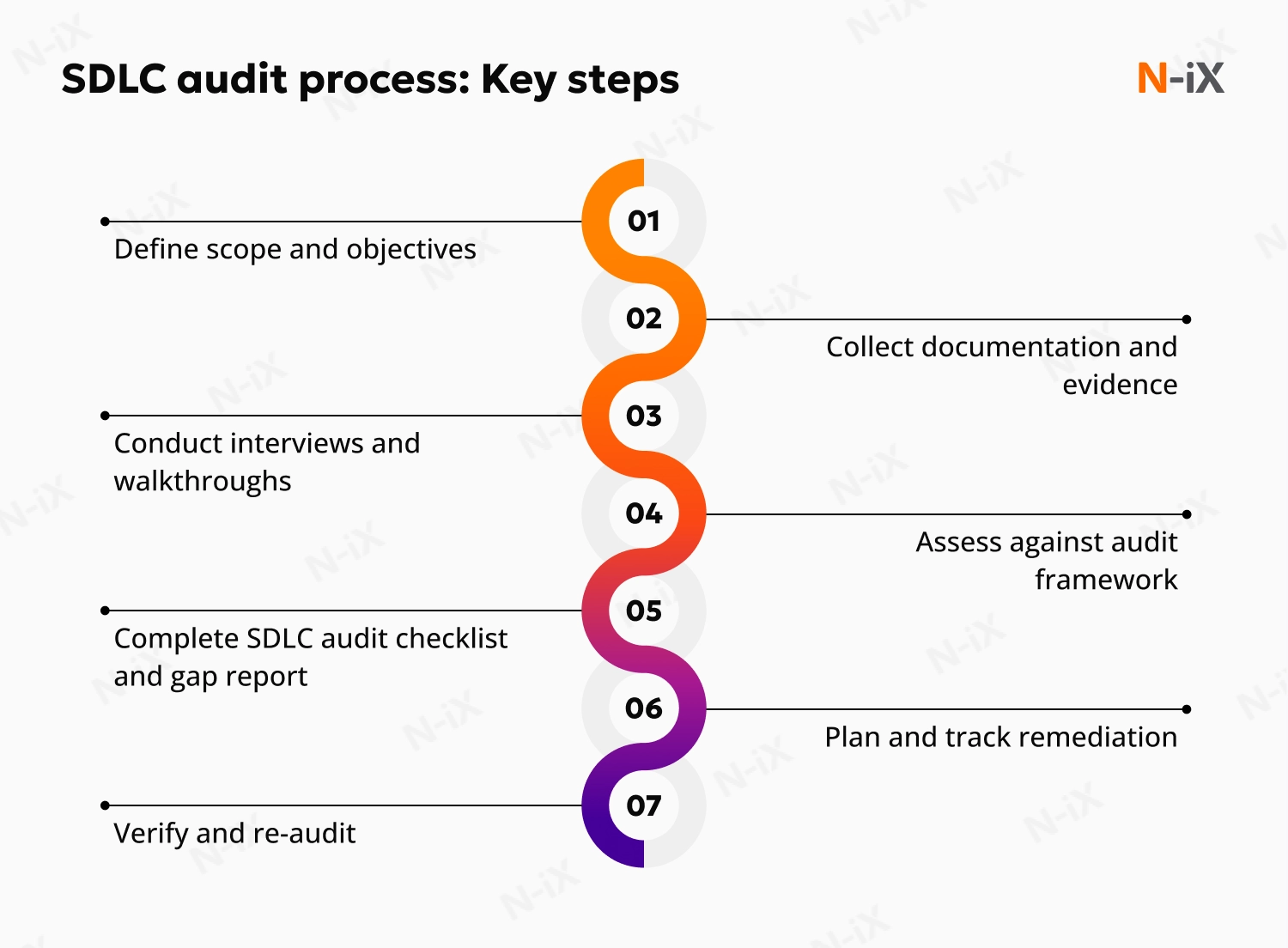

The SDLC audit process: What are the key steps?

An SDLC audit follows a structured sequence of steps. The specific procedures vary depending on scope, industry, and whether the audit is internal or external, but the core logic is consistent.

1. Defining scope and objectives

Before the audit begins, the organization and the audit team agree on what is being examined, to what standard, and for what purpose. A compliance-driven audit for ISO 27001 certification looks different from an internal process maturity review before scaling a development team.

Scope decisions at this stage include which teams and projects are covered, which lifecycle phases are in scope, the regulatory or quality framework the process will be measured against, and whether the audit will produce a gap analysis, a formal attestation, or both.

Getting the scope wrong at this stage is the most common reason audits produce findings that no one can act on. An audit that tries to cover everything produces a report too broad to prioritize. An audit with undefined objectives produces findings without context.

2. Documentation and evidence collection

The audit team reviews all process documentation relevant to the scope: development policies, coding standards, testing protocols, change management procedures, access control configurations, incident logs, and deployment records. This is not about whether the documentation is aesthetically complete. It's about whether the documented process reflects how work is actually done.

Discrepancies between documented process and actual practice are among the most common and consequential findings in SDLC audits. A change management policy that requires two-stage approval but is routinely bypassed under deadline pressure is not a change management control; it is false assurance.

Evidence collected typically includes version control history, pull request and code review logs, automated test results, defect tracking records, deployment pipeline configurations, and access control audit logs.
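A discrepancy like the bypassed two-stage approval described above can often be tested directly against pull request logs. The following is a minimal Python sketch, assuming review records have already been exported into plain dictionaries. The field names (`id`, `author`, `approvers`, `merged`) and the two-approval threshold are illustrative assumptions, not the schema of any particular version control platform.

```python
from typing import Iterable

REQUIRED_APPROVALS = 2  # assumed policy: two-stage approval


def find_policy_bypasses(pull_requests: Iterable[dict]) -> list[dict]:
    """Return merged pull requests that lack the required independent approvals."""
    bypasses = []
    for pr in pull_requests:
        if not pr.get("merged"):
            continue  # only merged changes actually reached the codebase
        # Self-approval does not count as an independent review.
        independent = set(pr.get("approvers", [])) - {pr.get("author")}
        if len(independent) < REQUIRED_APPROVALS:
            bypasses.append({"id": pr["id"], "approvals": len(independent)})
    return bypasses


# Hypothetical exported records, for illustration only.
sample = [
    {"id": 101, "author": "dev_a", "approvers": ["dev_b", "dev_c"], "merged": True},
    {"id": 102, "author": "dev_a", "approvers": ["dev_a", "dev_b"], "merged": True},
    {"id": 103, "author": "dev_c", "approvers": [], "merged": False},
]

bypasses = find_policy_bypasses(sample)
merged_count = sum(1 for pr in sample if pr["merged"])
bypass_rate = len(bypasses) / merged_count  # share of merged changes that skipped the control
```

A bypass rate measured this way gives the auditor evidence of how the control behaves in practice, which is a different question from whether the policy document exists.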

3. Interviews and process walkthroughs

Documentation tells auditors what the process is supposed to be. Interviews and walkthroughs tell them what it actually is. The audit team conducts structured interviews with developers, architects, QA leads, security engineers, and project managers. The goal is not to identify individual failures, but to understand where the process holds and where it doesn't.

The questions are practical: How is a new requirement formally accepted into the development backlog? What happens when a security vulnerability is found in a dependency the day before a release? Who has the authority to approve a production deployment, and what checks must be completed first?

The answers reveal process maturity more reliably than any documentation review, because they surface the informal workarounds and judgment calls that documentation never captures.

4. Assessment against the audit framework

The audit team maps findings against the relevant standard or internal maturity model. This may be a formal framework, such as OWASP SAMM, the NIST Secure Software Development Framework (SP 800-218, which is the current NIST guidance now that SP 800-64 has been withdrawn), or CMMI for development maturity, or an organization-specific set of controls. Each control is assessed as present and effective, present but ineffective, or absent.

Next, findings are categorized by severity: critical gaps that represent immediate risk, significant gaps that require planned remediation, and observations that represent optimization opportunities rather than control failures.
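The two-step logic above (assess each control, then categorize the resulting finding) can be sketched as a small data model. This is an illustrative Python sketch, not a prescribed method: the `immediate_risk` and `improvement_noted` flags stand in for auditor judgment, and the mapping rule in `categorize` is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class ControlStatus(Enum):
    EFFECTIVE = "present and effective"
    INEFFECTIVE = "present but ineffective"
    ABSENT = "absent"


class Severity(Enum):
    CRITICAL = "critical"
    SIGNIFICANT = "significant"
    OBSERVATION = "observation"


@dataclass
class ControlAssessment:
    control: str
    status: ControlStatus
    immediate_risk: bool = False     # auditor judgment (assumed input)
    improvement_noted: bool = False  # optimization opportunity, not a failure


def categorize(a: ControlAssessment) -> Optional[Severity]:
    """Return the finding severity, or None when there is nothing to report."""
    if a.status is ControlStatus.EFFECTIVE:
        # A working control can still yield an observation, never a gap.
        return Severity.OBSERVATION if a.improvement_noted else None
    # Ineffective or absent controls are gaps; immediate risk decides the tier.
    return Severity.CRITICAL if a.immediate_risk else Severity.SIGNIFICANT


# Hypothetical assessments, for illustration only.
assessments = [
    ControlAssessment("Two-stage change approval", ControlStatus.INEFFECTIVE, immediate_risk=True),
    ControlAssessment("Tested rollback procedure", ControlStatus.ABSENT),
    ControlAssessment("Static analysis in CI", ControlStatus.EFFECTIVE, improvement_noted=True),
    ControlAssessment("Environment separation", ControlStatus.EFFECTIVE),
]
severities = [categorize(a) for a in assessments]
```

Keeping status and severity as separate fields reflects the text: status describes the control, while severity describes the risk its failure creates.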

5. SDLC audit checklist completion and gap report

The formal output of this phase is a completed SDLC audit checklist and a gap report. The checklist provides a structured record of every control examined, its assessed status, and the evidence that supports that assessment. The gap report translates those findings into business-language risk descriptions with recommended remediation actions, owners, and timelines.

A well-structured gap report does not require the reader to understand the technical details of why a finding matters. It explains what the control failure enables—whether that's a class of security vulnerabilities, a compliance gap that would fail a regulatory review, or a delivery risk that has already cost the organization in past projects.

6. Remediation planning and tracking

An audit without a remediation plan is an expensive exercise in technical documentation. After the gap report is reviewed and accepted, the organization assigns owners to each finding and builds a remediation roadmap with realistic timelines and prioritization based on risk severity.

Critical findings should have immediate remediation actions defined, with interim mitigating controls in place while permanent fixes are implemented. Significant findings typically enter the standard project planning cycle with tracked milestones. Observations are reviewed during the next audit cycle.

Remediation progress should be tracked at the leadership level, not just the engineering level. If the executive sponsor isn't reviewing progress on critical findings monthly, the audit's value will erode over time as remediation gets deprioritized in favor of delivery pressure.
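As a rough illustration of the tracking described above, the sketch below orders open findings by severity and due date and flags critical findings that are past due, which is the kind of list an executive sponsor might review monthly. The `Finding` fields, severity labels, and sample data are hypothetical and would need to match whatever tracking tool the organization actually uses.

```python
from dataclasses import dataclass
from datetime import date

# Lower number means higher remediation priority.
SEVERITY_ORDER = {"critical": 0, "significant": 1, "observation": 2}


@dataclass
class Finding:
    title: str
    severity: str
    owner: str
    due: date
    closed: bool = False


def remediation_roadmap(findings: list[Finding]) -> list[Finding]:
    """Open findings, highest severity first, then earliest due date."""
    open_findings = [f for f in findings if not f.closed]
    return sorted(open_findings, key=lambda f: (SEVERITY_ORDER[f.severity], f.due))


def overdue_critical(findings: list[Finding], today: date) -> list[Finding]:
    """Critical findings that are still open after their due date."""
    return [
        f for f in findings
        if f.severity == "critical" and not f.closed and f.due < today
    ]


# Hypothetical findings, for illustration only.
findings = [
    Finding("No rollback procedure", "significant", "release-lead", date(2026, 3, 1)),
    Finding("Production access unreviewed", "critical", "security-lead", date(2026, 1, 15)),
    Finding("Inconsistent PR templates", "observation", "eng-manager", date(2026, 6, 1)),
    Finding("Unscanned dependencies", "critical", "platform-lead", date(2026, 2, 1), closed=True),
]

roadmap_titles = [f.title for f in remediation_roadmap(findings)]
escalations = [f.title for f in overdue_critical(findings, today=date(2026, 2, 10))]
```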

7. Verification and re-audit

Remediation is only effective if it's verified. After remediation actions are completed, the audit team confirms that the new controls are in place, documented, and actually followed in practice. This is the step that closes the loop and produces the evidence that regulators, clients, and insurers need to see.

For organizations under continuous compliance obligations, this verification feeds directly into the next audit cycle. A mature organization treats SDLC auditing not as a periodic project but as a continuous process that keeps its development practice aligned with evolving requirements, threat landscapes, and regulatory expectations.

SDLC audit checklist: The essential control areas

While every audit is scoped differently, the following control areas appear in virtually every comprehensive audit. This is a business-level map of what gets examined and why each area matters, not an exhaustive technical checklist:

- Requirements management. Are requirements formally documented, traced through development, and reviewed for completeness before development begins? Gaps here are the most common source of scope creep and late-stage rework.

- Security integration. Is security built into each phase of the lifecycle: threat modeling in design, static analysis in development, and dynamic testing before release? Or is it applied only as a final-phase check? Post-build security remediation costs significantly more than design-phase prevention.

- Code review and quality gates. Are there documented, enforced criteria that code must meet before moving to the next phase? Are those criteria actually applied, or bypassed when deadlines approach?

- Test coverage and strategy. Does the testing process cover the risk surface of the system, including integration, regression, security, and performance testing, or is it limited to functional unit tests? Are test results documented and traceable to requirements?

- Change management. Is there a formal process for approving changes to requirements, code, or infrastructure? Are changes logged, reviewed, and tracked? Uncontrolled change is the single most common source of production incidents.

- Access control and environment separation. Are production environments separated from development and testing? Who has access to what, and is that access reviewed regularly? Excessive access in development environments is a persistent and underestimated risk.

- AI tool governance. Are there documented policies governing the use of AI coding assistants? Do review processes account for AI-generated code? Are there controls over what data is submitted to external AI tools?

- Incident and defect tracking. Are defects and incidents formally logged, prioritized, and resolved with documented root cause analysis? Is that analysis fed back into the development process?

- Deployment and release controls. Are deployments executed through controlled pipelines with defined approval gates? Is there a tested rollback procedure? Is deployment authority formally assigned?

- Compliance documentation. Does the organization maintain the evidence (logs, approvals, test results, access records) that regulators and clients require? Is that evidence current and retrievable?

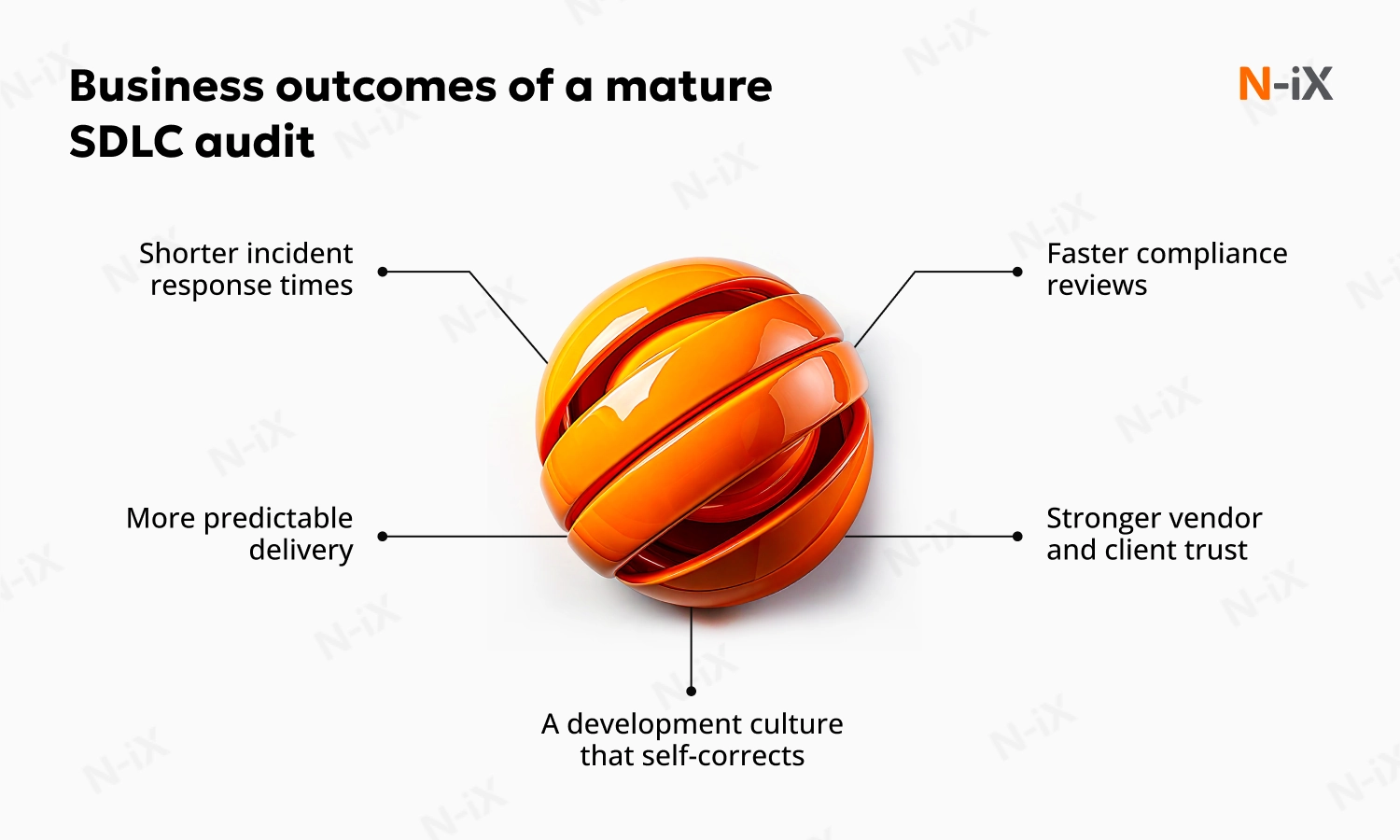

What good looks like: The outcomes of a mature SDLC auditing program

Organizations that audit their SDLC regularly and act on findings consistently don't just have cleaner compliance records. They build delivery organizations that are structurally more reliable.

Shorter incident response times

When the development process is well-documented and controls are in place, the blast radius of a production incident is smaller, and the path to resolution is faster. Teams know what changed, when, and who approved it.

More predictable delivery

Projects that run through a mature SDLC have fewer late-stage surprises because the process gates that catch problems early are actually working. Risks that would otherwise surface during testing or deployment are found and resolved during design and development.

Faster compliance reviews

Organizations with a mature, regularly audited SDLC can demonstrate compliance with a fraction of the effort required by organizations that scramble to reconstruct evidence when a regulatory review is announced.

Stronger vendor and client trust

A documented, audited development process is increasingly a procurement requirement in enterprise software, regulated industries, and government contracting. It's not just a risk control; it's a competitive differentiator.

A development culture that self-corrects

Perhaps most importantly, regular audits normalize the idea that process improvement is a standing expectation, not a crisis response. Teams that operate in that environment surface problems earlier, escalate faster, and make better decisions under pressure, because the process supports them rather than working against them.

When to commission an SDLC audit

Most organizations should audit their SDLC at least annually. Beyond that baseline, specific triggers should prompt an audit regardless of schedule: before pursuing or renewing compliance certification, after a significant production incident or major delivery failure, before scaling development capacity by adding headcount or new delivery partners, when integrating AI development tools at scale, when a major platform migration is planned, or when entering a new regulatory jurisdiction.

Each of these is a moment when process gaps that were tolerable at a smaller scale become material risks at a larger scale. An audit at the right moment is considerably cheaper than the incident it prevents.
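The scheduling rule above (an annual baseline plus event-driven triggers) is simple enough to encode as a check. The Python sketch below is one hypothetical way to do so; the trigger identifiers are names invented for this example, and the 365-day baseline is an assumed reading of "at least annually".

```python
from datetime import date, timedelta

ANNUAL_BASELINE = timedelta(days=365)  # assumed interpretation of "at least annually"

# Hypothetical identifiers for the triggers listed above.
TRIGGERS = {
    "certification_pending",
    "major_incident",
    "scaling_capacity",
    "ai_tools_at_scale",
    "platform_migration",
    "new_jurisdiction",
}


def audit_due(last_audit: date, today: date, active_events: set[str]) -> tuple[bool, list[str]]:
    """Return whether an audit is due, together with the reasons."""
    # Events that are not recognized triggers are ignored.
    reasons = sorted(active_events & TRIGGERS)
    if today - last_audit >= ANNUAL_BASELINE:
        reasons.insert(0, "annual_baseline")
    return bool(reasons), reasons


triggered = audit_due(date(2025, 9, 1), date(2026, 1, 15), {"major_incident", "office_move"})
baseline = audit_due(date(2025, 1, 1), date(2026, 1, 15), set())
not_due = audit_due(date(2025, 9, 1), date(2026, 1, 15), set())
```

Returning the reasons alongside the boolean keeps the decision explainable, which matters when the check is used to justify audit spend.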

Final thoughts

An SDLC audit is not primarily a technical exercise. It's a question about whether the organization has the process discipline to deliver software that is safe, reliable, and compliant at the scale it needs to operate.

The question is not whether engineering teams are capable—it's whether the process they're working within is structured to make their work visible, reviewable, and improvable over time. In 2026, with AI tooling accelerating both delivery speed and the risk surface it introduces, that question is more consequential than it has ever been.

N-iX has over two decades of experience helping global enterprises audit, restructure, and continuously improve their software development processes. Our compliance certifications, including ISO 27001, SOC 2, PCI DSS, ISO/IEC 27701:2019, FSQS, and GDPR, are built on the same foundations we help our clients establish. Whether you need an independent external audit, a gap analysis ahead of a regulatory review, or a longer-term process transformation, our engineering and DevSecOps teams have the experience to run it and the organizational context to make the findings actionable.

FAQ

How often should an organization conduct an SDLC audit?

At a minimum, annually. Organizations should also conduct SDLC audits before compliance certification reviews, after significant incidents or delivery failures, before scaling development capacity, when integrating AI tools at scale, and when entering regulated industries or new jurisdictions.

What is the difference between an SDLC audit and a code review?

A code review examines whether specific code is correct, secure, and maintainable. An SDLC audit examines whether the process that produces code is structured to consistently deliver those properties. Both are valuable, but they answer different questions. An audit is a prerequisite for understanding whether any individual code review is actually reliable.

Who should be involved in an audit of your SDLC?

This type of audit requires input from across the delivery organization: developers, architects, QA leads, security engineers, project managers, and operations teams. Stakeholders responsible for compliance, legal, and risk should be involved in scoping and reviewing findings. Executive sponsorship is critical to ensuring that findings are acted on rather than filed.

What are the procedures for conducting an SDLC audit?

The core procedures in conducting an SDLC audit are: define scope and objectives, collect documentation and evidence, conduct interviews and process walkthroughs, assess findings against the relevant framework, produce a gap report with prioritized findings, build and track a remediation plan, and verify remediation effectiveness. The cycle then repeats.
