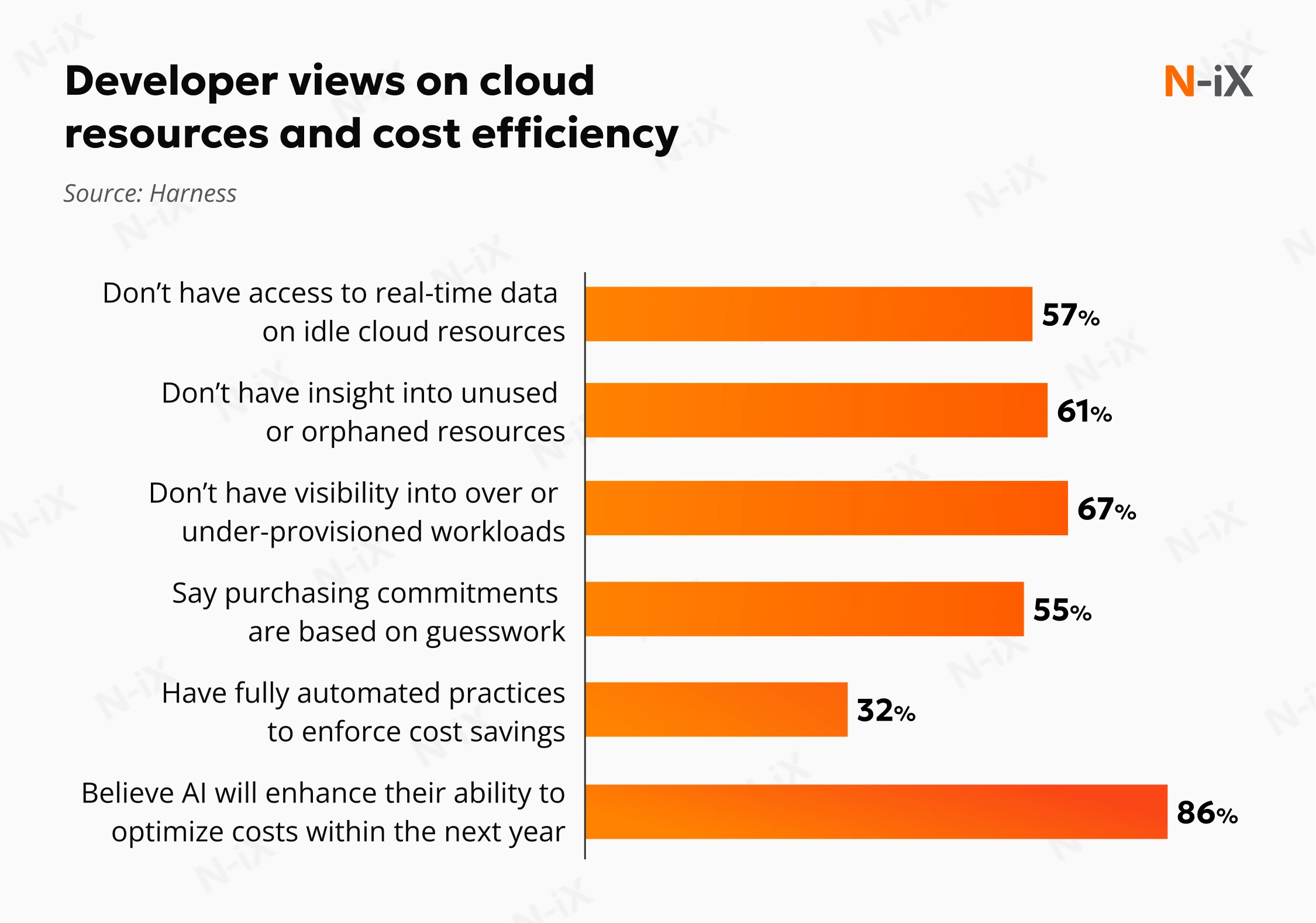

Manual cloud management is costly, both financially and in terms of team productivity. Despite automation advancements, it’s estimated that $44.5B—21% of 2025 enterprise cloud infrastructure spend—was associated with underutilized resources. [1]

Engineers are also losing valuable time: on average, they spend 12 hours a week dealing with incidents and fixing issues that started in the web console. [2] Much of this effort stems from limited real-time visibility, forcing teams to troubleshoot with incomplete data and turning cloud infrastructure management into an expensive exercise in guesswork.

But what if your infrastructure could manage itself? Autonomous cloud management is helping businesses optimize resources, enhance visibility, and improve team productivity. In this guide, we’ll walk you through how autonomous systems work, how to implement them, and what challenges to consider along the way.

What is autonomous cloud management?

The autonomous cloud is an approach to cloud management where AI-enabled systems build, govern, optimize, and heal themselves with minimal human intervention. Unlike traditional setups built on scripts, CI/CD pipelines, and predefined detection rules, such intelligent clouds don’t require constant manual intervention. They continuously learn from real-time data to anticipate issues and dynamically adjust resources.

Some elements of this approach have long been part of cloud platforms. For example, Kubernetes can automatically restart failed workloads or rebalance resources. While these capabilities are useful, they rely on predefined rules and cannot interpret context or adjust decisions as conditions change. AI agents enhance automation by enabling adaptive, context-aware responses.

In most enterprise environments, this autonomy is introduced progressively, with higher levels of automation in development and testing. Critical production changes still require controlled, human-in-the-loop oversight.

The value of this technology becomes clearer when we distinguish between a system that’s merely automated and one that is fully autonomous. They represent two different stages of infrastructure maturity:

- Automated systems operate on hardcoded rules, routines, and initial programming. They execute predictable tasks perfectly but struggle to interpret new signals, distinguish false positives, or adapt to changing conditions.

- Autonomous systems use advanced AI and built-in feedback loops to become context-aware and independent. They learn from historical data, adapt to complex, unpredictable tasks, and refine their decisions based on prior outcomes.
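
To make the distinction concrete, here is a minimal sketch that contrasts a hardcoded rule with a check that adapts to an observed baseline. It is an illustration only: the 80% threshold, the z-score limit, and the function names are assumptions, and a simple statistical baseline stands in for the far richer models an autonomous platform would use.

```python
from statistics import mean, stdev

STATIC_CPU_LIMIT = 80.0  # hypothetical hardcoded rule: alert above 80% CPU


def static_rule_alert(cpu_percent: float) -> bool:
    """Automated style: one fixed threshold, regardless of context."""
    return cpu_percent > STATIC_CPU_LIMIT


def adaptive_alert(history: list[float], cpu_percent: float, z_limit: float = 3.0) -> bool:
    """Adaptive style: flag values that sit far from the recently observed baseline."""
    if len(history) < 2:
        return False  # not enough data to form a baseline yet
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return cpu_percent != baseline
    return abs(cpu_percent - baseline) / spread > z_limit


# A hypothetical batch service that normally runs hot at around 85% CPU.
busy_history = [84.0, 86.0, 85.0, 87.0, 83.0, 85.0]
print(static_rule_alert(86.0))             # the fixed rule fires on normal load
print(adaptive_alert(busy_history, 86.0))  # the baseline-aware check stays quiet
print(adaptive_alert(busy_history, 40.0))  # a sudden drop is flagged
```

The fixed rule raises a false positive on a workload that is simply busy by design, while the baseline-aware check reacts only to behavior that is unusual for that workload.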

Traditional cloud management is like driving a manual transmission car. Automated management is cruise control, and the autonomous cloud is a fully self-driving vehicle that can anticipate traffic and navigate detours. Each option has its role depending on the situation.

Such a “self-driving” cloud manages and optimizes itself in four primary ways:

The 4 principles of cloud autonomy

- Self-building: The system automatically provisions and deploys production-ready infrastructure based on natural language requests and application requirements.

- Self-governing: It continuously enforces security and compliance policies, detecting risks and preventing violations before they occur.

- Self-healing: The platform proactively detects anomalies and restores system operations swiftly when faults occur, significantly reducing downtime.

- Self-optimizing: It continuously analyzes usage patterns and dynamically adjusts resources to balance sufficient application performance with maximum cost efficiency.

Key advantages of autonomous cloud management

By trusting an AI agent to handle daily operations, you can eliminate costly bottlenecks, reduce human error, and enable more focus on strategic growth. Here are the five key autonomous cloud management benefits our cloud experts highlight:

Significant cost reductions

Autonomous systems help streamline resource usage, significantly lowering cloud costs. They prevent excessive overprovisioning and reduce waste by dynamically rightsizing resources based on real-time demand. AI-driven auto-scaling ensures that you’re only using what you need, when you need it. This enables your organization to reduce unnecessary expenses while maintaining optimal performance.
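
As a rough illustration of rightsizing, the sketch below sizes an instance from observed usage rather than from a fixed guess. The usage samples, the 95th-percentile target, and the 20% headroom are assumptions chosen for the example, not a recommended policy.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty sample list."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def recommend_vcpus(cpu_cores_used: list[float], headroom: float = 0.2) -> int:
    """Suggest a vCPU count that covers p95 usage plus a safety margin."""
    p95 = percentile(cpu_cores_used, 95)
    return max(1, math.ceil(p95 * (1 + headroom)))


# Hypothetical CPU usage samples (in cores) for an instance provisioned with 16 vCPUs.
usage = [2.1, 2.4, 3.0, 2.8, 3.5, 4.2, 3.9, 3.1, 2.7, 2.2]
provisioned = 16
suggested = recommend_vcpus(usage)
print(f"provisioned={provisioned}, suggested={suggested}")
```

A real platform would also weigh memory, I/O, seasonality, and instance pricing, but the core idea is the same: the recommendation follows measured demand.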

Time savings and increased agility

Infrastructure as Code already reduces the need for ad hoc scripting, but it still requires manual configuration and maintenance. With autonomous management, you can provision production-ready environments in minutes rather than days. Proactive self-healing also reduces mean time to resolve (MTTR) by up to 70%, limiting operational disruption. [3] As a result, your organization spends less time resolving infrastructure issues and more time advancing product and business priorities.

Enhanced customer experience

When your cloud environment can detect issues and recover on its own, users are less likely to experience service disruptions. It applies intelligent self-healing to correct faults in real time and uses dynamic auto-scaling to handle traffic spikes without waiting for human input. This helps ensure steady response times and consistent service quality, even if a server instance goes offline or demand surges unexpectedly.

Safer and higher-quality releases

Every production release introduces financial and operational risk. The autonomous cloud reduces that exposure by generating AI-based scorecards that estimate cost impact, latency shifts, and error probability before deployment. It evaluates changes against defined performance and reliability thresholds, similar to SRE guardrails, and can block or adjust releases that put system stability at risk. In some cases, it also automatically remediates configuration issues.
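
One way to picture such a release gate is a scorecard checked against explicit thresholds. The sketch below is hypothetical: the field names and limits are assumptions, and the predicted values would come from whatever model produces the scorecard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scorecard:
    """Predicted impact of a release (all fields are hypothetical)."""
    monthly_cost_delta_usd: float
    p95_latency_delta_ms: float
    error_probability: float  # 0.0 to 1.0


@dataclass(frozen=True)
class Guardrails:
    """Assumed thresholds a release must stay within."""
    max_cost_delta_usd: float = 500.0
    max_latency_delta_ms: float = 25.0
    max_error_probability: float = 0.02


def evaluate_release(card: Scorecard, limits: Guardrails) -> list[str]:
    """Return the violated guardrails; an empty list means the release may proceed."""
    violations = []
    if card.monthly_cost_delta_usd > limits.max_cost_delta_usd:
        violations.append("cost")
    if card.p95_latency_delta_ms > limits.max_latency_delta_ms:
        violations.append("latency")
    if card.error_probability > limits.max_error_probability:
        violations.append("error_probability")
    return violations


candidate = Scorecard(monthly_cost_delta_usd=120.0, p95_latency_delta_ms=40.0, error_probability=0.01)
violations = evaluate_release(candidate, Guardrails())
print("blocked:" if violations else "approved", violations)
```

Returning the list of violated guardrails, rather than a bare yes or no, keeps the decision explainable to the team that owns the release.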

Employee retention and burnout reduction

Manual cloud operations often rely on constant oversight, after-hours incident response, and repetitive maintenance, leaving little space for personal development. Over time, this pressure can wear down focus and morale. Autonomous management bears much of this operational load by handling routine remediation and stability tasks automatically. It enables your engineers to concentrate on improving existing solutions, optimizing costs, and working on business-aligned initiatives, all of which support long-term engagement.

Autonomous systems also extend their impact beyond technical teams. For instance, in a recent engagement with a financial services provider, N-iX applied AI to automate routine processes, significantly reducing the manual effort required by the client’s teams. By offloading repetitive tasks to AI, we enabled their specialists to focus on more high-value work. This improved operational efficiency and helped alleviate workload pressures, ultimately contributing to better team engagement.

These operational and talent impacts form the foundation of a strong business case for autonomous cloud systems. The next step is understanding how to introduce autonomous capabilities in a structured way.

Getting started with autonomous cloud management: 6 key steps

Shifting to the autonomous model isn’t instantaneous. Rather than overhauling your existing infrastructure, autonomous cloud enablement requires a structured, phased approach that builds capability and trust over time. Here’s a practical framework to guide you in introducing autonomy step by step.

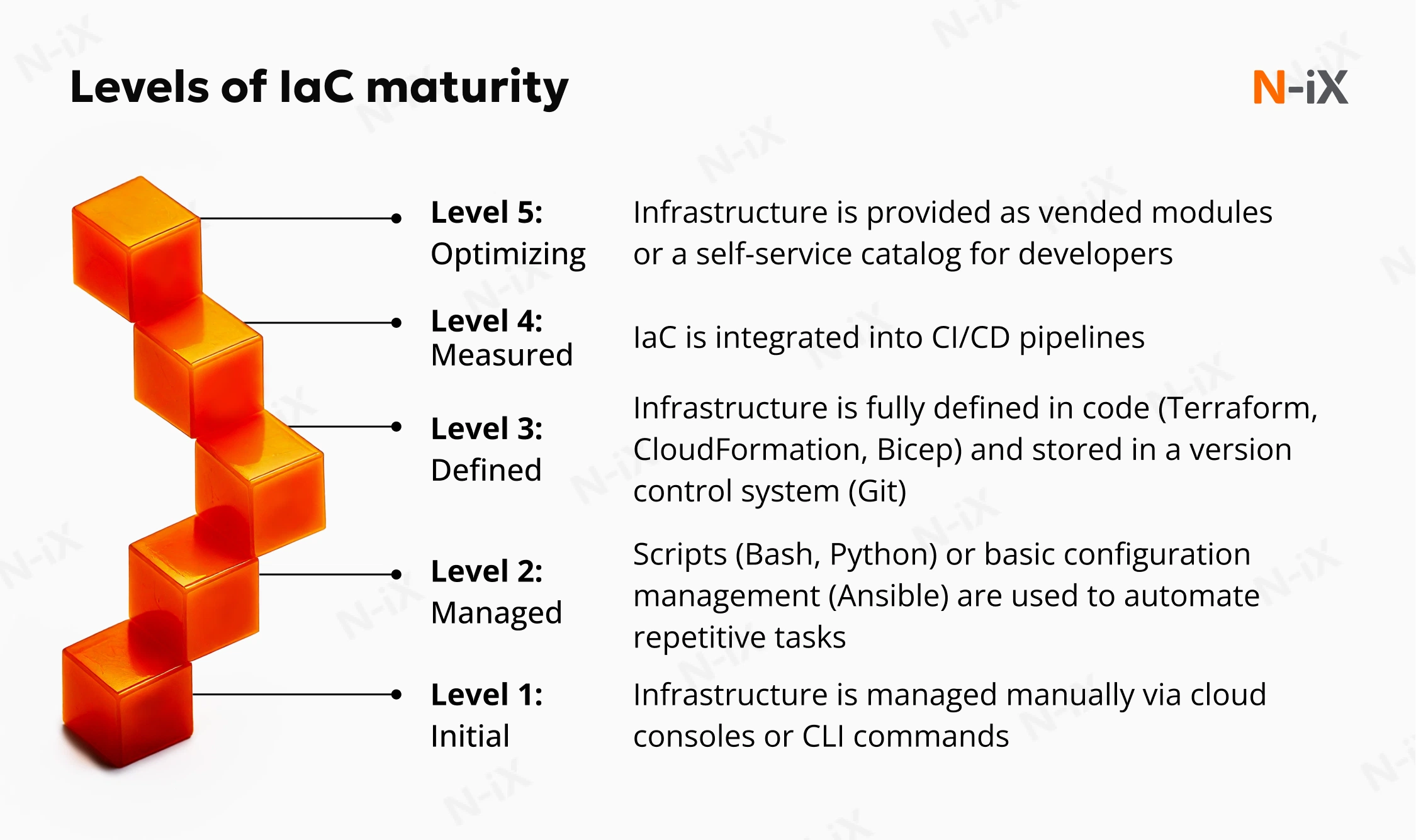

Step 1: Conduct an IaC maturity assessment

Start by evaluating your current Infrastructure as Code (IaC) maturity and identifying operational bottlenecks that slow your progress. Consider both your tech stack and human costs. Look at how much time your team spends on manual provisioning, reactive troubleshooting, and compliance checks. This baseline will help pinpoint the most expensive pain points and make a strong case for transitioning to autonomous cloud operations.

Our experts recommend focusing on the following key areas for assessment:

- Automation coverage: Do your systems only execute predefined workflows, or can they adapt and make decisions based on real-time conditions?

- Provisioning speed: How long does it take to set up and configure new environments?

- Error rates: How often do errors or failures occur, and how much time does your team spend fixing them?

- Compliance checks: How often do you need to manually verify compliance and security requirements?

- Scalability: Can your infrastructure handle increased demand without significant changes to the existing setup?

By answering these questions, you’ll get a clear picture of your IaC maturity and be ready to identify where automation can deliver the greatest value.
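
A baseline like this can be turned into a simple ranking of pain points. The sketch below assumes you have collected weekly hours per activity; the hours and the hourly rate are placeholder figures, not benchmarks.

```python
HOURLY_COST_USD = 85.0  # assumed blended engineering rate

# Assumed weekly team hours per activity, gathered during the assessment.
weekly_hours = {
    "manual provisioning": 14.0,
    "reactive troubleshooting": 22.0,
    "manual compliance checks": 9.0,
}


def rank_pain_points(hours: dict[str, float], hourly_cost: float) -> list[tuple[str, float]]:
    """Return activities sorted by estimated annual cost, highest first."""
    annual = {name: h * hourly_cost * 52 for name, h in hours.items()}
    return sorted(annual.items(), key=lambda item: item[1], reverse=True)


ranking = rank_pain_points(weekly_hours, HOURLY_COST_USD)
for name, cost in ranking:
    print(f"{name}: ${cost:,.0f} per year")
```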

Step 2: Establish a governance-first policy architecture

Before enabling self-governing features, set clear boundaries for your AI systems. Establish standards for data protection, regulatory requirements (such as the EU AI Act, SOC 2, or GDPR), financial limits, and access control based on least-privilege principles. This ensures that when the AI automatically provisions or scales resources, it operates within these strict parameters, mitigating the risk of rogue infrastructure.
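
In practice, boundaries of this kind are often expressed as policy that is checked before any action runs. The following is a minimal sketch under stated assumptions: the `Policy` fields, action names, region names, and budget are hypothetical and do not reflect any specific platform's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    allowed_regions: frozenset[str]
    monthly_budget_usd: float
    allowed_actions: frozenset[str]  # least privilege: anything not listed is denied


@dataclass(frozen=True)
class ProposedAction:
    action: str
    region: str
    estimated_monthly_cost_usd: float


def check_action(policy: Policy, current_spend_usd: float, proposal: ProposedAction) -> list[str]:
    """Return reasons to reject the proposal; an empty list means it is within policy."""
    reasons = []
    if proposal.action not in policy.allowed_actions:
        reasons.append(f"action '{proposal.action}' is not permitted")
    if proposal.region not in policy.allowed_regions:
        reasons.append(f"region '{proposal.region}' is outside the allowed set")
    if current_spend_usd + proposal.estimated_monthly_cost_usd > policy.monthly_budget_usd:
        reasons.append("proposal would exceed the monthly budget")
    return reasons


policy = Policy(
    allowed_regions=frozenset({"eu-west-1", "eu-central-1"}),
    monthly_budget_usd=10_000.0,
    allowed_actions=frozenset({"scale_out", "scale_in"}),
)
proposal = ProposedAction(action="scale_out", region="us-east-1", estimated_monthly_cost_usd=800.0)
rejections = check_action(policy, current_spend_usd=9_500.0, proposal=proposal)
print(rejections)
```

Denying by default and listing only permitted actions mirrors the least-privilege principle: the agent can do nothing that the policy does not explicitly allow.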

Step 3: Integrate with your observability stack

Autonomous systems require rich data to make intelligent decisions. Connect the AI platform to your existing application performance monitoring (APM) tools, DevOps pipelines, and security scanners to gather the necessary input.

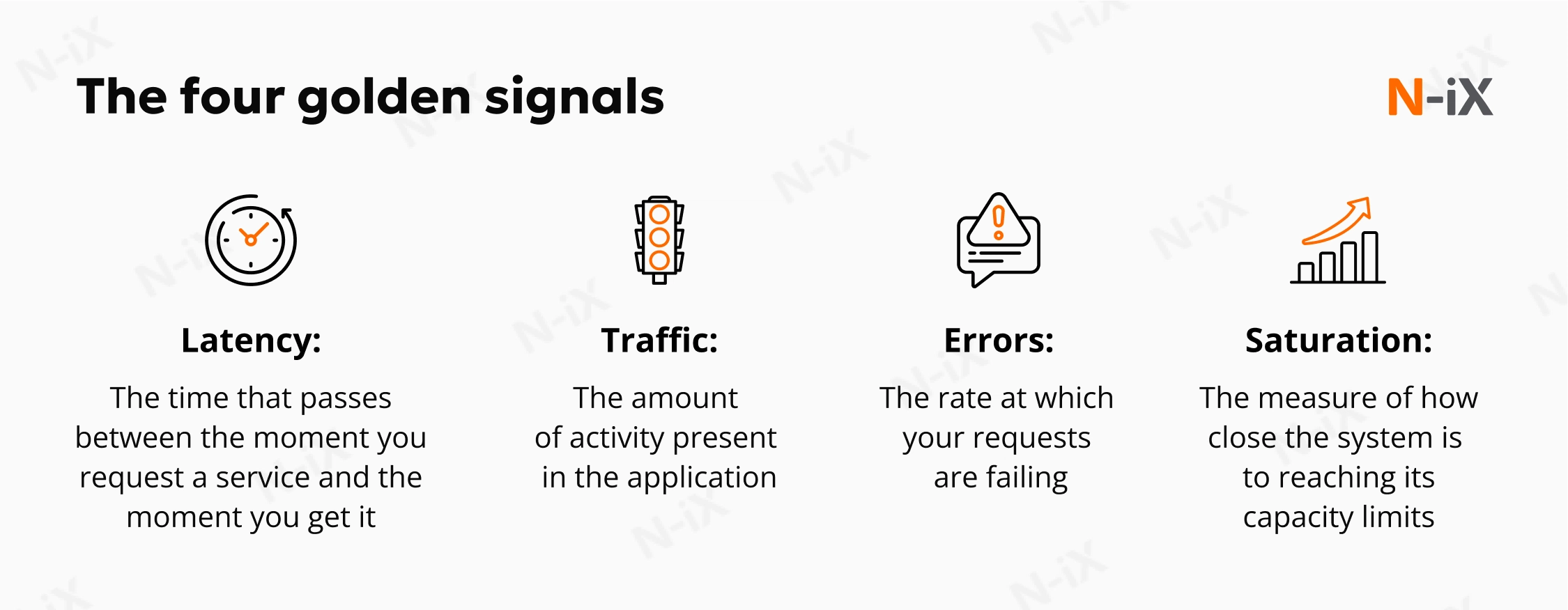

To manage your infrastructure effectively, the system needs access to the four golden signals (sometimes called golden metrics), a term from Google's Site Reliability Engineering practice that covers latency, traffic, errors, and saturation. These metrics provide the context for real-time decision-making, enabling the AI to adjust resources, detect issues, and optimize performance. By tracking them continuously, the system learns from feedback loops, which helps keep autonomous cloud management aligned with your business goals and operational needs.
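
As a small illustration, latency, traffic, errors, and saturation can each be derived from raw request records and a resource reading for one time window. The record shape, the choice of p95 latency, and CPU as the saturation measure are assumptions made for this sketch; your APM tooling will expose its own equivalents.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    latency_ms: float
    status: int  # HTTP status code


def golden_signals(requests: list[Request], window_s: float,
                   cpu_used: float, cpu_capacity: float) -> dict[str, float]:
    """Summarize latency, traffic, errors, and saturation for one time window."""
    count = len(requests)
    if count:
        latencies = sorted(r.latency_ms for r in requests)
        p95 = latencies[math.ceil(0.95 * count) - 1]  # nearest-rank percentile
        error_rate = sum(r.status >= 500 for r in requests) / count
    else:
        p95, error_rate = 0.0, 0.0
    return {
        "latency_p95_ms": p95,
        "traffic_rps": count / window_s,
        "error_rate": error_rate,
        "saturation": cpu_used / cpu_capacity,
    }


# Hypothetical requests observed during a 10-second window.
sample = [Request(120.0, 200), Request(80.0, 200), Request(95.0, 500),
          Request(400.0, 200), Request(110.0, 200)]
signals = golden_signals(sample, window_s=10.0, cpu_used=3.0, cpu_capacity=4.0)
print(signals)
```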

Step 4: Start with a pilot

Begin by applying autonomous optimization to non-critical environments or applications to build confidence in the system. This approach lets you test the AI’s self-optimizing features (e.g., rightsizing cloud costs) without risking core production workloads. It’s a low-risk way to assess value and build trust in the platform before expanding its scope.

To ensure a smooth start, focus on the following key elements:

- Low-risk environment: Start with development or non-critical applications to minimize exposure.

- Clear success metrics: Understand what successful implementation looks like before rolling out further. While it can be challenging to set precise KPIs from the start, focus on defining broad indicators, such as improved efficiency, reduced manual intervention, or better resource utilization.

- Team alignment: Make sure your team is prepared and understands how to interact with the new system.

- Proper monitoring tools: Ensure you have the right tools in place to track progress and address any autonomous cloud management challenges as they arise.

Step 5: Activate proactive self-healing and auto-scaling

Once you’ve built confidence in the system, shift from simply monitoring anomalies to letting the AI take action. It can automatically detect and resolve issues such as configuration drift and incidents, minimizing downtime and reducing manual intervention. The system can also dynamically adjust resource allocation to optimize performance.
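
For a sense of what an automated scaling decision looks like, the sketch below applies the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas equal the current count multiplied by the ratio of the observed metric to its target, rounded up. The replica bounds, tolerance, and metric values are assumptions for the example, and this reactive rule is only the baseline that a predictive, AI-driven system would build on.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Proportional scaling: grow or shrink replicas by the metric-to-target ratio."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target; avoid flapping
    wanted = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, wanted))


print(desired_replicas(4, current_metric=90.0, target_metric=60.0))  # scale out
print(desired_replicas(4, current_metric=62.0, target_metric=60.0))  # within tolerance
print(desired_replicas(4, current_metric=15.0, target_metric=60.0))  # scale in
```

The explicit minimum and maximum act as guardrails, so even an aggressive scaling signal cannot push the workload outside agreed limits.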

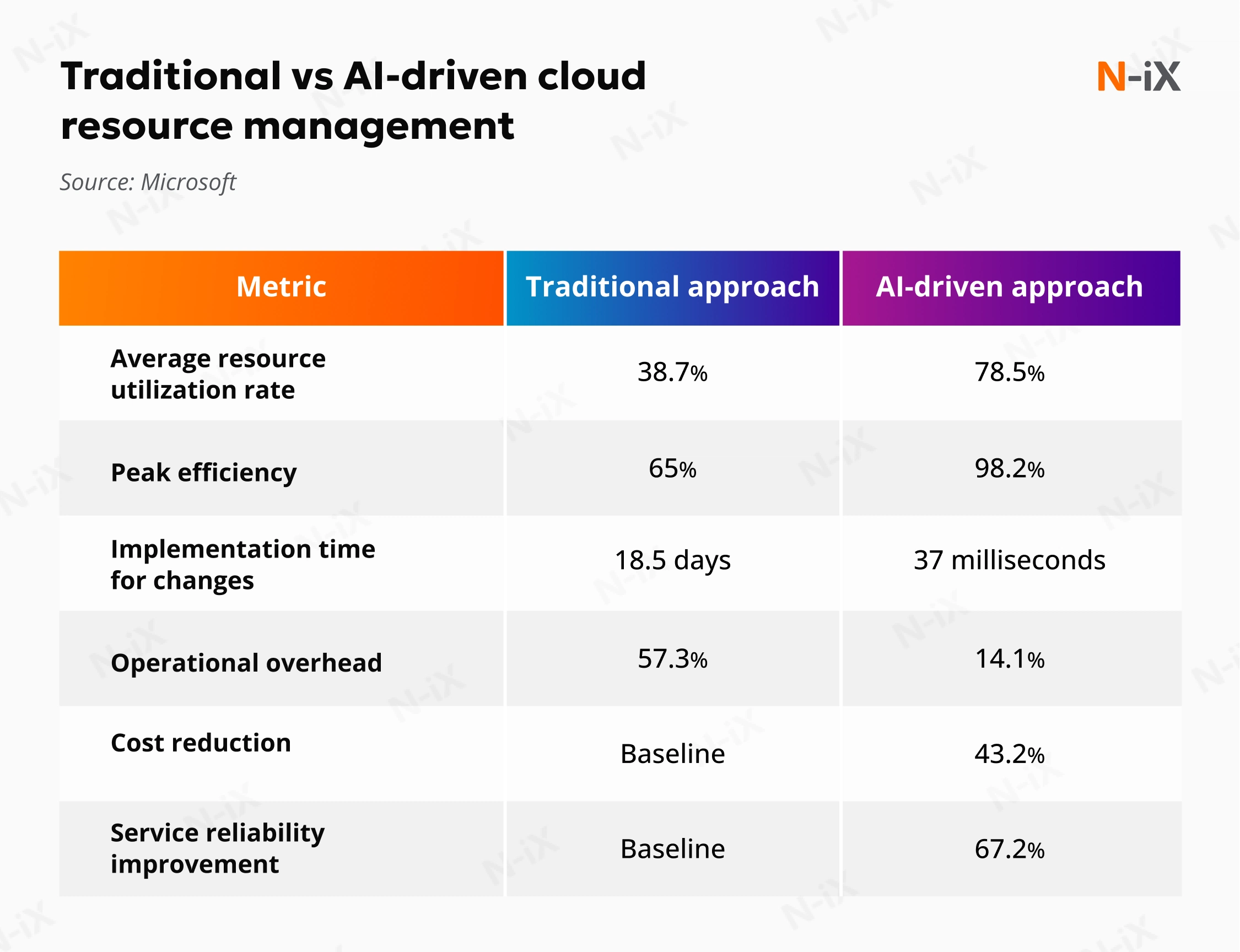

According to Microsoft’s 2025 research, the AI-driven approach achieves a 78.5% resource utilization rate. [4] This indicates that AI systems allocate resources to maximize performance while minimizing idle capacity. In comparison, the traditional approach has an average utilization rate of 38.7%, leaving a lot of resources underutilized.
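
To see what these two percentages imply, the short calculation below estimates how much capacity each approach would have to provision to deliver the same amount of useful work. It rests on a simplifying assumption that the two utilization rates apply to comparable workloads; the 100 work units are arbitrary.

```python
AI_DRIVEN_UTILIZATION = 0.785    # from the cited figure
TRADITIONAL_UTILIZATION = 0.387  # from the cited figure


def capacity_needed(useful_work_units: float, utilization: float) -> float:
    """Provisioned capacity required to deliver a given amount of useful work."""
    return useful_work_units / utilization


work = 100.0  # arbitrary units of useful work
traditional = capacity_needed(work, TRADITIONAL_UTILIZATION)
ai_driven = capacity_needed(work, AI_DRIVEN_UTILIZATION)
print(f"traditional: {traditional:.1f} units provisioned")
print(f"AI-driven:   {ai_driven:.1f} units provisioned")
print(f"ratio:       {traditional / ai_driven:.2f}x")
```

Under that assumption, the traditional approach provisions roughly twice the capacity for the same output.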

Step 6: Prepare your teams for the transition

Transform your engineers from manual task “doers” into “orchestrators” of high-level AI agents. This shift requires proper training so your team can interact with the system using natural-language prompts rather than complex code. Training is critical to help your teams trust the new system.

As you move forward, it’s important to recognize that implementing autonomous cloud management comes with its own set of challenges. Learning to overcome technical, security, and operational obstacles will be crucial for a smooth transition to autonomous systems.

Overcoming autonomous cloud management challenges

Moving to independent decision-making introduces new layers of complexity. Organizations need to rethink visibility, guardrails, and operational discipline as decision-making is increasingly delegated to systems within defined boundaries. Our experts highlight the following challenges businesses often encounter and explain how to approach them.

Ungoverned AI infrastructure

Implementing AI to manage cloud environments requires clear governance to avoid unintended actions. If an autonomous system operates without defined boundaries, it could provision resources that violate compliance standards or lead to unexpected cloud sprawl and budget overruns.

How N-iX solves it: We design AI systems with governance built in from the start. Each AI agent operates within a clearly defined scope, with limited permissions and cost controls aligned to your policies. Before any action is taken, security, compliance, and budget rules are enforced. The system continuously monitors activity and applies these guardrails, while your teams retain oversight of critical decisions.

Data dependency and quality

Autonomous cloud management relies on Machine Learning models, predictive analytics, and historical data. Consequently, the quality and quantity of data you feed the AI system determine how effective it will be. If the data supplied for processing is biased, insufficient, or doesn’t reflect the full scope of your cloud operations and resource usage, the AI’s performance will suffer. Poor data quality can lead to inaccurate anomaly detection, ineffective resource allocation, or incorrect scaling decisions.

How N-iX solves it: We ensure the autonomous platform integrates with your existing observability stack, such as APM tools, to gather real-time golden metrics and continuously analyze system behavior. This allows the AI to detect patterns, support faster issue identification, and recommend or execute predefined actions within set boundaries. By implementing continuous learning algorithms, we maintain high data integrity and ensure the AI accurately understands your operational baseline.

The need for model interpretability and auditability

As AI assumes responsibility for cloud operations, the black-box nature of these algorithms poses a significant challenge. In enterprise and highly regulated sectors, security and compliance teams require absolute transparency. They must understand exactly why an AI system made a specific decision, such as modifying a network configuration or auto-scaling a resource. Without model interpretability, organizations often struggle to audit the system and validate automated incident response.

How N-iX solves it: We maintain comprehensive logs of all autonomous actions and use frameworks that quantify the AI decision-making process. While the system handles routine complexity, we design the architecture so that human oversight is always retained for critical, high-risk operational changes.
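
As an illustration of what an auditable action log might contain, the sketch below records each autonomous action together with its reason and the inputs behind it. The field names, agent name, and service name are hypothetical and are not taken from any specific N-iX tooling.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def audit_record(agent: str, action: str, target: str, reason: str,
                 inputs: dict, approved_by: Optional[str] = None) -> str:
    """Serialize one autonomous action as a JSON line for an append-only log."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "action": action,
        "target": target,
        "reason": reason,
        "inputs": inputs,
        "approved_by": approved_by,  # None means no human approval was required
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    agent="scaling-agent",
    action="scale_out",
    target="checkout-service",
    reason="p95 latency above target for 5 minutes",
    inputs={"p95_latency_ms": 410, "target_ms": 300, "replicas_before": 4, "replicas_after": 6},
)
print(line)
```

Capturing the inputs alongside the decision lets an auditor reconstruct why the action was taken, and the `approved_by` field makes it visible which changes passed through a human.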

Navigating the cultural shift and change management

Transitioning to the autonomous cloud is not just a technological upgrade; it’s a cultural shift for your teams, especially SRE and DevOps. Engineers accustomed to manual IaC scripting need to adapt to new workflows in which AI agents manage provisioning, scaling, and resource optimization. There is often resistance to adoption that stems from a lack of understanding or the friction of changing daily routines.

How N-iX solves it: Engineers need to rethink their roles, moving from routine execution to higher-value work and viewing AI as an accelerator rather than a replacement. We guide your organization through a structured change management process. By starting pilot programs on non-critical workloads, we help you build team confidence and reduce cognitive load. This supports smoother adoption and enables teams to focus on more meaningful outcomes.

Key takeaways

- AI-driven systems effectively automate resource allocation, infrastructure provisioning, and self-healing, improving cloud performance while reducing human intervention.

- Key autonomous cloud management benefits include significant cost and time savings, improved resource utilization, better customer experience, and enhanced employee engagement.

- Governance and data quality are critical for ensuring AI systems make effective, compliant decisions in cloud environments.

- Transitioning to autonomous cloud management requires a cultural shift, helping engineers move from manual task execution to overseeing AI-driven operations.

Why implement autonomous cloud management with N-iX?

With 23 years of engineering experience and a team of 2,400+ professionals, N-iX helps businesses optimize cloud infrastructure management through advanced automation. Our 400+ cloud experts and 200+ AI and data science specialists have successfully delivered over 150 cloud projects, helping organizations automate and scale their infrastructures with precision. We apply AI-driven automation to optimize resource allocation, reduce cloud costs, and improve performance.

If you need to ensure your cloud management is efficient, secure, and compliant, reach out today and let us optimize your infrastructure for maximum performance.

References

- [1] FinOps in Focus 2025 Report, Harness

- [2] 2024 State of DevOps Report, DORA

- [3] AI-driven observability shortens MTTR, Yahoo Finance

- [4] AI-Driven Resource Allocation: Revolutionizing Cloud Infrastructure Management, Microsoft
