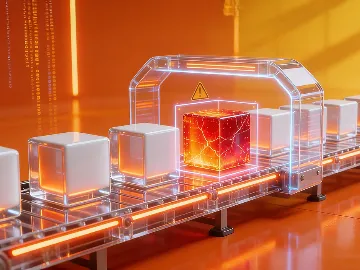

Production errors take time to diagnose. Site reliability engineering improves infrastructure management, but manual teams still can’t resolve issues fast enough to limit business impact. Picture a scenario: it’s 2 AM on a Thursday, and your checkout service is returning errors for an increasing number of customers. Order volume is dropping. Your on-call engineer logs in and starts tracing the issue, eventually finding that a deployment from earlier that day changed how the database handles connections under load. The fix is quick, but the diagnosis takes an hour.

When an incident like this hits, you have two options: wake your engineer up, or let an AI SRE investigate and act before the damage compounds.

Companies report an average of 86 outages per year. When they occur, 70% of large enterprises take more than 60 minutes to resolve them. The overall average, across company sizes and industries, is 196 minutes of service disruption per incident. [1] At this frequency and duration, costs to revenue, customer trust, and engineering capacity add up fast.

Much of that time typically goes to diagnosis, not the fix itself. AI-driven SRE shortens that window through automated detection, faster root cause analysis, and, in some cases, autonomous remediation. This guide explains how AI augments SRE, what makes it effective, and what practical implementation steps you can take to maximize return on investment.

What is an AI SRE?

An AI site reliability engineer is an AI-driven system that takes on the detection and response work of a human SRE team. It monitors your systems, connects signals across logs, metrics, and traces, and recognizes when something is off. What sets it apart from traditional SRE is autonomy. Rather than alerting an engineer who then investigates and acts, AI agents detect anomalies and can resolve known issues on their own, leaving engineers to review the actions afterward.

N-iX’s senior tech expert puts it this way:

“AI SRE is constantly on watch to guard your system. It can stop a risky release before it reaches production and step in the moment something breaks. You still stay in control, but instead of investing significant time and effort, you review actions that have already been taken to protect the system.”

Agentic SRE typically works in two directions:

- Pre-release prevention: AI evaluates code changes, pipeline signals, and current system health. If reliability targets are at risk, it can pause or block deployment before the issue reaches production.

- Remediation in production: When something fails, AI investigates the event and identifies the most likely cause. It can restart containers, bring the service back up, and preserve the error budget.

An error budget is a core SRE metric that defines your maximum allowable downtime or errors over a set period. For instance, suppose your service has a 99.9% uptime target, measured over a full 30-day month. That gives you approximately 43 minutes of allowable downtime per month. Rafal notes that organizations relying on people on call 24/7 use up most of their error budget on human response time: engineers need to wake up, analyze, and check. AI, by contrast, can act swiftly, even in production, ensuring faster, well-documented remediation and protecting business continuity.
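The arithmetic behind that 43-minute figure can be sketched in a few lines. This is a minimal illustration, not a prescribed tool: the function names `error_budget_minutes` and `budget_remaining` are hypothetical, and the calculation assumes a purely time-based availability SLO.

```python
def error_budget_minutes(slo_target: float, window_days: int = 30) -> float:
    """Allowed downtime in minutes for an availability target over a window."""
    if not 0.0 < slo_target < 1.0:
        raise ValueError("slo_target must be a fraction between 0 and 1")
    total_minutes = window_days * 24 * 60  # 43,200 minutes in a 30-day month
    return total_minutes * (1.0 - slo_target)


def budget_remaining(slo_target: float, downtime_minutes: float,
                     window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative if overspent)."""
    budget = error_budget_minutes(slo_target, window_days)
    return (budget - downtime_minutes) / budget


print(round(error_budget_minutes(0.999), 1))                   # 43.2
print(round(budget_remaining(0.999, downtime_minutes=30), 2))  # 0.31
```

In this example, a single 30-minute incident consumes roughly 70% of the monthly budget, which is why response time matters so much at a 99.9% target.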

This level of automation keeps your teams in the loop while allowing the loop to move faster. It represents capabilities that span the full reliability lifecycle, from pre-release checks to post-incident learning.

Key AI SRE capabilities and why your business needs them

Software releases are faster and more frequent than a decade ago, and the delivery pace keeps increasing. DevOps initially shortened the cycle; AI is now cutting it further. At the same time, more code, more updates, and more deployments all mean more chances for failure. SRE automation with AI is what ensures that pace doesn’t come at reliability’s expense. Let’s explore its core capabilities and what they mean for your operations.

1. Release risk prevention

Releases that eventually cause outages often clear every checkpoint. The code review passes, staging looks fine, and the deployment goes out. What nobody checked was whether the system was ready for it.

That’s the gap an AI SRE agent closes. Before a release goes out, the system evaluates:

- Code changes and their reliability risk;

- Current production health and service conditions;

- Remaining error budget, and whether there’s enough headroom to ship.

If any of these signals is off, the agent can flag it, recommend a hold, or block the deployment outright, depending on how your policies are set up. This is the earliest and least expensive point where you can prevent an outage. Instead of discovering reliability problems after customers feel them, you can proactively reduce the number of bad releases that reach production.

It also makes your error budget a useful decision-making tool. When the AI analyzes it at deployment time and can act on it, the budget becomes a mechanism for balancing innovation speed with reliability. It enables safer release velocity, better protection of SLA commitments, and fewer production incidents.
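A release gate of this kind can be sketched as a small policy function. Everything here is an illustrative assumption rather than a reference design: the `ReleaseSignals` fields, the `change_risk` score, the threshold defaults, and the `enforce` switch that separates "recommend a hold" from "block outright".

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    FLAG = "flag"
    HOLD = "recommend_hold"
    BLOCK = "block"


@dataclass
class ReleaseSignals:
    change_risk: float        # hypothetical score: 0.0 (trivial) to 1.0 (high risk)
    production_healthy: bool  # current production health
    budget_remaining: float   # fraction of error budget left, 0.0 to 1.0


def gate_release(signals: ReleaseSignals, enforce: bool = False,
                 max_risk: float = 0.7, min_budget: float = 0.2) -> Decision:
    """Evaluate the three pre-release signals against policy thresholds."""
    # Unhealthy production or too little budget headroom is treated as severe.
    severe = (not signals.production_healthy) or signals.budget_remaining < min_budget
    if severe:
        # Policy decides whether the agent may block or only recommend a hold.
        return Decision.BLOCK if enforce else Decision.HOLD
    if signals.change_risk > max_risk:
        return Decision.FLAG
    return Decision.ALLOW


print(gate_release(ReleaseSignals(0.3, True, 0.05), enforce=True))
```

The `enforce` flag mirrors the policy choice described above: the same signals yield a recommendation in one configuration and a hard block in another.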

2. Automated root cause analysis (RCA)

When an incident hits, the damage accumulates during the 30 to 60 minutes it takes to figure out what caused it. Diagnosis is where most of the downtime cost actually originates.

AI SRE compresses it. The system correlates logs, distributed traces, metrics, recent deployments, and dependency health, then surfaces the most probable cause with supporting evidence. Your engineers get a prioritized hypothesis to work from, not an open-ended search.
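One simple way to picture the "prioritized hypothesis" is a ranking of recent changes by how close they were to the incident and how related they are to the affected service. The sketch below is deliberately naive and assumes a hypothetical `ChangeEvent` record and hand-picked relevance weights; a production system would correlate far richer signals.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ChangeEvent:
    service: str
    kind: str       # e.g. "deployment" or "config_change"
    at: datetime


def rank_suspects(incident_start, affected, dependencies, events,
                  lookback=timedelta(hours=12)):
    """Score recent changes by recency and relation to the affected service."""
    scored = []
    for event in events:
        age = incident_start - event.at
        if age < timedelta(0) or age > lookback:
            continue  # ignore changes after the incident or outside the window
        recency = 1.0 - age / lookback  # 1.0 = just before the incident
        if event.service == affected:
            relevance = 1.0
        elif event.service in dependencies:
            relevance = 0.6   # assumed weight for direct dependencies
        else:
            relevance = 0.1   # assumed weight for unrelated services
        scored.append((round(recency * relevance, 3), event))
    return sorted(scored, key=lambda pair: pair[0], reverse=True)


start = datetime(2025, 1, 9, 2, 0)
events = [
    ChangeEvent("checkout", "deployment", start - timedelta(hours=10)),
    ChangeEvent("orders-db", "config_change", start - timedelta(hours=1)),
    ChangeEvent("email", "deployment", start - timedelta(minutes=30)),
]
for score, event in rank_suspects(start, "checkout", {"orders-db"}, events):
    print(score, event.service, event.kind)
```

Here the database configuration change ranks first because it is both recent and on a direct dependency, which gives the engineer a starting point instead of an open-ended search.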

Multi-agent AI systems applied to Kubernetes environments produced a 45% reduction in mean time to repair (MTTR) compared to traditional observability baselines. [2] In industrial settings, combining ML with automated remediation brought that figure to 67%. [3]

Faster diagnosis also streamlines what happens after the incident closes. Post-incident reports, SLA documentation, and customer communication all require a clear account of what occurred and why. When AI leads the investigation, the documentation builds alongside it as the incident unfolds. By the time the issue is resolved, the record is already available.

3. Incident response and auto-remediation

Most first-line fixes aren’t complicated. You simply restart a service, roll back a deployment, or reroute traffic. What slows them down is the gap between the alert firing and an engineer acting on it, at whatever hour that happens to be.

With agentic AI SRE, the first- and second-line responses run automatically, without waiting for an engineer to log in. It operates on a least-privilege, just-in-time basis, requesting only the access it needs for that specific incident and releasing it once the task is done.

No one reacts faster, no one investigates faster than AI. The on-call engineer can just log in, check if everything is fine, and go back to sleep.

The level of autonomy you grant the AI system depends on your business priorities. You can run AI in review mode, where it proposes a fix and waits for an engineer’s sign-off before acting. However, when every minute of downtime carries a measurable cost, you can configure the agent to act and document rather than wait. In both cases, every action is logged and can be rolled back.
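The difference between review mode and act-and-document mode can be illustrated with a small sketch. The `Remediation` and `Agent` classes below are hypothetical and keep the audit log in memory for brevity; the point is only that both modes log every step and keep a rollback path.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Remediation:
    name: str
    apply: Callable[[], None]
    rollback: Callable[[], None]


@dataclass
class Agent:
    autonomous: bool                      # False = review mode
    audit_log: list = field(default_factory=list)

    def handle(self, fix: Remediation, approved: bool = False) -> bool:
        """Apply the fix, or only propose it when sign-off is still required."""
        if not self.autonomous and not approved:
            self.audit_log.append(("proposed", fix.name))
            return False
        fix.apply()
        self.audit_log.append(("applied", fix.name))
        return True

    def undo(self, fix: Remediation) -> None:
        """Roll back a previously applied fix and record it."""
        fix.rollback()
        self.audit_log.append(("rolled_back", fix.name))


state = {"replicas": 1}
scale_up = Remediation(
    "scale_up_checkout",
    apply=lambda: state.update(replicas=3),
    rollback=lambda: state.update(replicas=1),
)

reviewer = Agent(autonomous=False)
reviewer.handle(scale_up)                 # proposes only, state unchanged
reviewer.handle(scale_up, approved=True)  # engineer signed off, fix applied
print(state, reviewer.audit_log)
```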

4. Intelligent monitoring and anomaly detection

Most cloud monitoring setups are built around thresholds: if a metric crosses a fixed line, an alert fires. The problem is that thresholds don’t know context. A CPU spike during a scheduled batch job looks identical to the one during peak traffic. Your engineers get notified about such an event and have to decide whether it matters. At the same time, a slow memory leak that never crosses any threshold goes unnoticed until it takes down a service.

AI for SRE learns what “normal” looks like for your specific systems, accounting for time of day, traffic patterns, and recent deployment history. It identifies real anomalies rather than every threshold breach, which means fewer false positives and a better signal-to-noise ratio overall.
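To make the contrast with fixed thresholds concrete, here is a minimal sketch of a context-aware baseline that learns a separate "normal" for each hour of the day and flags values by z-score. The class name `HourlyBaseline` and its defaults are assumptions for illustration; real systems use richer models and would also need trend detection to catch slow leaks.

```python
from collections import defaultdict
from statistics import mean, stdev


class HourlyBaseline:
    """Learns typical metric values per hour of day and flags outliers."""

    def __init__(self, z_threshold: float = 3.0, min_samples: int = 5):
        self.samples = defaultdict(list)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def observe(self, hour: int, value: float) -> None:
        self.samples[hour].append(value)

    def is_anomaly(self, hour: int, value: float) -> bool:
        history = self.samples[hour]
        if len(history) < self.min_samples:
            return False  # not enough context yet to judge
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            return value != mu
        return abs(value - mu) / sigma > self.z_threshold


baseline = HourlyBaseline()
for cpu in (88, 90, 92, 91, 89):   # 02:00, scheduled batch job, high CPU is normal
    baseline.observe(2, cpu)
for cpu in (38, 40, 42, 41, 39):   # 14:00, regular daytime load
    baseline.observe(14, cpu)

print(baseline.is_anomaly(2, 91))   # same reading, expected during the batch job
print(baseline.is_anomaly(14, 91))  # same reading, unusual in the afternoon
```

The same CPU reading is treated differently depending on when it occurs, which is exactly the context a static threshold lacks.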

That distinction has an important operational consequence. Alert fatigue compounds over time. When engineers are regularly notified of conditions that resolve on their own, they start to hesitate before acting. A monitoring system with a high false positive rate gradually erodes trust in the alerting process. And the more desensitized your teams become, the slower they will react when something genuinely fails.

5. Continuous learning and operational memory

Once an incident closes, your team knows something they didn’t know an hour ago: how a specific failure happened, what it took to diagnose, and what finally resolved it. That knowledge lives in someone’s head and, without a deliberate way to capture it, fades when the person moves on.

Systems designed to preserve the full investigation path solve this at the source. Each incident adds to a shared record of how your infrastructure behaves, which is available the next time a similar situation arises. New team members inherit context rather than starting from zero. Also, recurring failures get recognized as patterns, not treated as fresh emergencies each time.

With AI, you can encode senior expertise into a reasoning system. The knowledge stays accessible, even if the responsible engineer leaves.

AI is a pragmatic tool: it delivers according to the level of expertise behind it. Without that foundation, it becomes difficult to measure and govern. To make implementation effective and secure, you need a phased approach guided by engineers who understand both the technology and your specific infrastructure.

How to get started: A roadmap for AI SRE implementation

A well-structured roadmap moves in stages, each building on the results obtained before expanding scope. Our experts highlight the following five implementation stages, from initial assessment to ongoing operations:

Stage 1: Conduct the Discovery Phase

Before selecting tools or defining scope, you need a clear picture of where you stand. Undefined baselines and observability gaps often produce results that are hard to measure (and even harder to trust). Our experts highlight several key steps to take during this stage:

- Audit your observability stack: Check your logging, metrics, and distributed tracing for coverage gaps. AI tools require significant amounts of high-quality data for training. Without it, outputs will be unreliable regardless of what you build on top of the system.

- Map your current SRE practices, toolchain, and team structure: Document how incidents are handled now, what tools are in use, and who owns what.

- Identify your biggest reliability pain points: Look at where incidents cluster, where MTTR runs longest, and where on-call load concentrates.

- Define your baseline metrics: Record your current MTTR, alert volume, on-call incident frequency, and SLA compliance rate before introducing any changes.
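As a rough sketch of the last step, baseline metrics can be computed from whatever incident records you already keep. The `Incident` fields and the `compute_baseline` function are hypothetical; adapt them to the export format of your incident tracker.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean


@dataclass
class Incident:
    detected: datetime
    resolved: datetime
    paged_on_call: bool


def compute_baseline(incidents, alerts_fired: int, window_days: int) -> dict:
    """Summarize MTTR, alert volume, and on-call load for a reporting window."""
    durations = [
        (i.resolved - i.detected).total_seconds() / 60 for i in incidents
    ]
    return {
        "mttr_minutes": mean(durations) if durations else 0.0,
        "alerts_per_day": alerts_fired / window_days,
        "on_call_pages": sum(i.paged_on_call for i in incidents),
    }


incidents = [
    Incident(datetime(2025, 3, 1, 2, 0), datetime(2025, 3, 1, 3, 0), True),
    Incident(datetime(2025, 3, 9, 14, 0), datetime(2025, 3, 9, 16, 0), False),
]
print(compute_baseline(incidents, alerts_fired=600, window_days=30))
```

Recording these numbers before any change is what makes the later stage-by-stage comparisons meaningful.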

Stage 2: Define your strategy before you build

Implementation decisions are easiest to make at the planning stage, before any configuration is in place. This covers agent architecture, access control, automation scope, and governance structure. A few key points to address while designing your strategy include:

- Decide on agent architecture early: Per-service agents offer tighter security and least-privilege isolation; platform-level agents are easier to govern centrally. The right choice depends on your security posture and how your teams are structured.

- Align on governance: Agree on how agents are authenticated, who approves access expansions, and what the escalation path looks like if automation reaches its limits.

- Define the automation ladder: Map out where AI recommends actions for human review, where it executes with sign-off, and where it acts on its own.

- Define the access model before deployment: Every agent action should operate on least-privilege, just-in-time permissions tied to a specific incident or task. Role-based access control and SSO create the governance layer that makes this manageable at scale.

- Involve the business, technology, and security teams: Decisions made without any of these perspectives tend to create problems for the others.
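Two of the decisions above, the automation ladder and the just-in-time access model, lend themselves to a short sketch. The action names, the `LADDER` mapping, and the in-memory `active_grants` set are illustrative assumptions; in practice the grants would be issued and revoked through your identity provider or RBAC system.

```python
from contextlib import contextmanager
from enum import IntEnum


class Autonomy(IntEnum):
    RECOMMEND = 1             # AI suggests, a human acts
    EXECUTE_WITH_SIGNOFF = 2  # AI acts after approval
    AUTONOMOUS = 3            # AI acts and documents


# Hypothetical ladder: each action type is assigned a rung up front.
LADDER = {
    "restart_service": Autonomy.AUTONOMOUS,
    "rollback_deployment": Autonomy.EXECUTE_WITH_SIGNOFF,
}


def autonomy_for(action: str) -> Autonomy:
    """Unknown actions default to the most conservative rung."""
    return LADDER.get(action, Autonomy.RECOMMEND)


active_grants = set()  # stand-in for a real access-management backend


@contextmanager
def just_in_time_access(incident_id: str, permission: str):
    """Grant one permission for one incident and always revoke it afterward."""
    grant = (incident_id, permission)
    active_grants.add(grant)
    try:
        yield grant
    finally:
        active_grants.discard(grant)


with just_in_time_access("INC-1", "deployments:restart"):
    print(autonomy_for("restart_service").name, active_grants)
print(active_grants)  # empty again once the task is done
```

Defaulting unknown actions to `RECOMMEND` keeps anything the ladder has not explicitly covered under human review.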

Stage 3: Start with toil elimination and low-risk automation

Early wins build the confidence needed to go further. Starting an AI-driven SRE transformation with high-toil, low-risk operations lets you show clear results quickly and establish the trust needed to expand scope. Here’s where to start:

- Pick one or two targets: Alert noise reduction, automated runbook execution, and incident triage routing are common first targets. They deliver measurable results with minimal risk to production systems.

- Start in recommend mode: Let the system surface suggestions without acting on them. Engineers get familiar with how it reasons, and you get a natural feedback loop for calibrating where autonomous action makes sense next.

- Measure before you expand: Track changes to MTTR, alert volume, and on-call load against the baselines you set during Stage 1. Those numbers are what make the case for moving further.

Stage 4: Expand autonomy incrementally

AI SRE capabilities should expand in a deliberate sequence, driven by risk level and demonstrated performance at each stage. The automation ladder from Stage 2 defines this framework. Here’s how that progression typically looks:

- Follow the automation ladder in order: Start with recommendations, move to approval-gated execution, then expand to autonomous action only for patterns the system knows well. The typical sequence is:

  - Recommendation and triage;

  - Anomaly detection and RCA;

  - Approval-gated remediation;

  - Autonomous remediation for known patterns;

  - Pipeline-level prevention and release gating.

- Tie each expansion to results: Before moving to the next level, confirm measurable improvement against the baselines from Stage 1.

- Keep third-line incidents human-driven: Novel, complex, or potentially cascading failures require human judgment. That boundary stays firm regardless of how capable the system becomes.
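The rule "tie each expansion to results" can be expressed as a simple check before moving up a rung. This is a sketch under stated assumptions: the rung names paraphrase the sequence above, and the requirement that every tracked metric improve by at least 10% over the Stage 1 baseline is an arbitrary example threshold, not a recommendation.

```python
RUNGS = [
    "recommendation_and_triage",
    "anomaly_detection_and_rca",
    "approval_gated_remediation",
    "autonomous_remediation_known_patterns",
    "pipeline_prevention_and_release_gating",
]


def improved(baseline: dict, current: dict, min_improvement: float = 0.10) -> bool:
    """True if every baseline metric dropped by at least min_improvement."""
    return all(
        current[name] <= value * (1.0 - min_improvement)
        for name, value in baseline.items()
    )


def next_rung(current_rung: str, baseline: dict, current: dict,
              min_improvement: float = 0.10) -> str:
    """Advance one rung only when results justify it; never skip rungs."""
    index = RUNGS.index(current_rung)
    at_top = index == len(RUNGS) - 1
    if at_top or not improved(baseline, current, min_improvement):
        return current_rung
    return RUNGS[index + 1]


baseline = {"mttr_minutes": 90, "alerts_per_day": 20}
print(next_rung("recommendation_and_triage", baseline,
                {"mttr_minutes": 70, "alerts_per_day": 12}))
print(next_rung("recommendation_and_triage", baseline,
                {"mttr_minutes": 88, "alerts_per_day": 12}))
```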

Stage 5: Institutionalize and continuously improve

By this stage, the system is operational. The work that follows is about improving accuracy and turning each incident into lasting organizational knowledge. A few practices worth building into your regular operations include:

- Treating agents as software products: Schedule regular reviews to assess accuracy, catch outdated instructions, and incorporate feedback from your engineering team.

- Establishing a feedback loop: Have engineers review AI-generated decisions, flag incorrect actions, and provide feedback for improvement.

- Making every AI action traceable: Preserve the investigation path, remediation steps, and RCA for each incident. This serves both post-incident review and compliance requirements.

- Building expert SRE knowledge into the AI system: Document how your senior engineers approach diagnosis and remediation, then integrate that reasoning into agent instructions and skills.

- Revisiting your ROI at 6 and 12 months: Compare current MTTR, alert volume, and on-call load against your Stage 1 baselines to identify what works well and what needs attention.

How N-iX can help you implement pragmatic AI SRE

SRE and AI capabilities work within your existing systems. Integrating, governing, and calibrating them requires equal experience across cloud operations, DevOps, and AI development.

N-iX is a global technology partner for Pragmatic AI Software Engineering with 23 years of industry experience and 2,400 professionals on board. Our teams include 200 data and AI engineers, 400 cloud specialists, and 70 DevOps engineers with hands-on experience in reliability, automation, and infrastructure operations. On the platform side, N-iX holds the AWS AI Services Competency, Microsoft Azure AI Platform specialization, and Premier Status within the Snowflake AI Data Cloud Services Partner Program.

If reducing toil, shrinking MTTR, and building a reliable agentic SRE practice are on your roadmap, reach out to our team. We’ll help you assess where AI fits your current environment and define a practical path to get there.

References

[1] The State of Resilience 2025, Cockroach Labs

[2] Multi-Agent Human-AI Systems with Low-Code Platforms Enabling Adaptive Web Services and Real-Time Anomaly Remediation in Distributed Architectures, Nazmunisha N., via Preprints

[3] Proposal for a Sustainable Model for Integrating Robotic Process Automation and Machine Learning in Failure Prediction and Operational Efficiency in Predictive Maintenance, Applied Sciences, Volume 15 (2025)

Frequently Asked Questions

What is an AI SRE’s role in incident management?

It handles the response so your engineers can focus on development. For L1 and L2 (first- and second-line) incidents such as service restarts, traffic rerouting, and rollbacks, the AI acts faster than any person can. For anything more complex, it escalates with context already assembled: what happened, what it tried, and what it discovered.

What are the types of incidents AI SRE can resolve?

Anything with a known resolution path. Typical examples are resource saturation, failed deployments, service timeouts, misconfigured load balancers, and pods stuck in a crash loop. If there’s a runbook for it, AI can execute it. Complex, multi-system failures with unclear root causes stay with your engineers.

Does every company need agentic SRE, or just large enterprises?

Any organization running production systems can benefit from it, regardless of size. The risk isn’t that AI does too much; it’s that you lack the foundations to direct it. For example, if you haven’t defined your SLOs, you won’t know what thresholds to set. The foundation matters.

Isn’t letting AI act autonomously in production too risky?

Actually, in many ways, AI is more accountable than humans. It leaves a complete audit trail, acts consistently, and can be rolled back. The key is tiered autonomy: AI SRE handles L1/L2 scenarios it’s been trained for and escalates everything else. You wouldn’t let a junior engineer have root access on day one, and you should apply the same logic to your AI.

What happens to my existing SRE team?

They get their careers back. An AI SRE agent removes the toil so senior engineers can do what they’re actually good at: designing resilient systems, fine-tuning the AI, and solving problems that require genuine expertise. The goal is to let developers develop and let experts be experts again.

How do I handle security and access control for AI agents in production?

Least privilege and just-in-time access are the governing principles. An AI SRE should request only the permissions it needs for the specific task, tied to a specific incident. When the task is done, access is revoked.

Do I need a backup AI system in case my primary AI goes down?

Generally, no. Your primary insurance is your human team, not a second AI. Redundant AI stacks are expensive and rarely cost-effective.
